We’re embarking on a major revamp of our CPU benchmark hierarchy and Bench data. Testing is already underway, but over the next several weeks, we’ll be testing every CPU we have access to in order to create a comprehensive dataset that spans a little over a decade of CPU releases. And we want to give Tom’s Hardware Premium readers a peek behind the curtain as we start this journey, just as we did last week with our GPU test plan.

Below, we’re going to run through all of the games and apps we’re using to test, take a look at our test bench, and expand on the logic that went into building our test suite. Unlike GPUs, where we’re primarily concerned with gaming performance, our CPU test suite is extremely varied. Broadly, we have productivity, gaming, and power/efficiency benchmarks, but there are dozens of different types of workloads within each category.

As we look out over the next 12 months, both Intel and AMD should have new CPUs available. Despite some rumors to the contrary, AMD and Intel have both confirmed that next-gen Zen 6 and Nova Lake are on track for the end of the year. Building our database now lets us get a ten-thousand-foot view of performance over at least half a dozen generations of CPUs as we prepare for what’s coming next.

Although we’re at the starting line now, the completion of this retest isn’t the finish line. Testing is always ongoing here at Tom’s Hardware, especially for CPUs and GPUs. To that end, our testing will continue to evolve. Here, we’re setting a foundation on which to compare a massive list of CPUs that we can continue to build off of as new generations are released.

The test plan, and the benchmarks we’re using

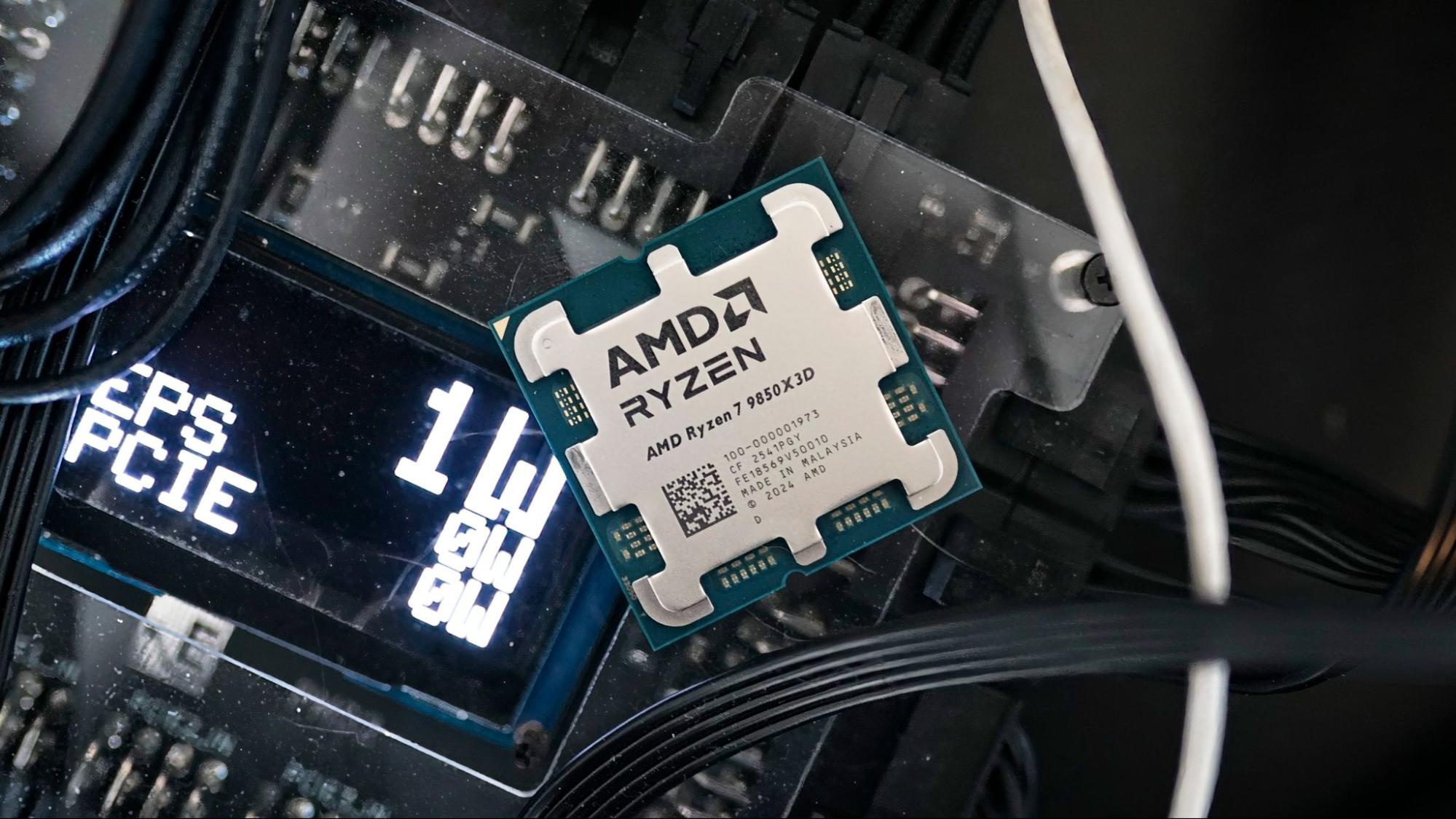

It can’t start anywhere else but the hardware. The current plan is to test everything from AMD Excavator and Intel 7th-gen to present, which would be Zen 5 for AMD and Arrow Lake for Intel. We may go back another generation, but we’re not looking at any DDR3 platforms — we can all agree that even some of the early DDR4 platforms are already pushing it from a relevance standpoint.

To tackle literally hundreds of CPUs, we have two people testing, myself and former CPU reviewer turned Editor-in-Chief, Paul Alcorn, and anywhere from two to six systems running at once. Naturally, it’s difficult to synchronize everything when we’re looking at such a broad range of hardware. Keeping everything in check are two golden OS images, which we freeze and duplicate across machines. We have an image for AMD and an image for Intel, and they never cross paths. The images are nearly identical, but they’re built from scratch, so nothing from the AMD image touches the Intel image, or vice versa.

Without revealing all our secrets, these images are frozen in time. There aren’t any updates to them unless we manually make an update. Everything from the drivers to what services are running in the background is identical, to give us comparable results every time. We can replicate these images at will, so we can always return to our ground-truth test conditions.

Although we are testing hundreds of chips, the emphasis is on newer CPUs. They are what matter most to most people, so that data is going to get priority as we go through this process. If you see older data lag behind newer data, that’s why. If we have to prioritize, we will, and the priority is on CPUs that are the most relevant to machines today.

There are a few key pieces of hardware that span all of our rigs to keep testing consistent:

| Component | Hardware |
| --- | --- |
| Storage | Sabrent Rocket 4 Plus 2TB |
| CPU Cooler | Corsair iCue Link H150i RGB (100% pump/fan speed) |
| GPU | RTX 2080 Super FE (Apps), RTX 5090 FE (Games) |
| Thermal Material | Arctic MX-4 |

Consistency is key. We have 12 identical Sabrent SSDs and six identical Corsair iCue Link H150i RGB coolers, with the latter ensuring that we have consistent thermal data from each and every CPU tested.

The only thing that changes between test benches is the CPUs and (when necessary) the motherboard. However, we keep the motherboard and its settings consistent for all chips on that platform. Here are the boards we’re using:

| Platform | Motherboard |
| --- | --- |
| AMD AM5 (Ryzen 7000, 9000) | MSI MPG X870E Carbon Wi-Fi |
| Intel LGA1851 (Arrow Lake) | ASRock Z890 Taichi |
| Intel LGA1700 (Alder, Raptor Lake) | MSI MPG Z790 Carbon Wi-Fi |
| Intel LGA1200 (Comet, Rocket Lake) | Gigabyte Aorus Z490 Master |
| AMD AM4 (Ryzen 1000, 2000, 3000, 5000) | MSI MEG X570 Godlike |
| Intel LGA1151 (Sky, Kaby, Coffee Lake) | MSI MEG Z390 Godlike |
| AMD AM3+ (Bulldozer) | ASUS Crosshair VI Hero |

Our testing is split into three phases: applications, power/efficiency, and gaming. Our application testing is automated, but that doesn’t mean it’s completely hands-off. We verify all data manually when a test pass is done and rerun tests if there are any outliers in the data. In addition, we have a specific process for reading and ingesting this data — a process that’s as fickle as it is powerful, which will quickly break if something is too far off base.
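To make the outlier check concrete, here is a minimal sketch of how repeated runs could be flagged for a rerun. It assumes each test is run several times and uses a coefficient-of-variation cutoff. The 2% threshold, the `needs_rerun` name, and the sample values are hypothetical placeholders, not a description of our actual pipeline.

```python
from statistics import mean, stdev

def needs_rerun(runs, max_cv=0.02):
    """Return True if repeated runs of one test vary too much to trust.

    max_cv is a hypothetical cutoff (2% coefficient of variation).
    """
    if len(runs) < 2:
        return True  # a single run cannot be checked for consistency
    avg = mean(runs)
    if avg == 0:
        return True  # a zero average points to a failed run
    return stdev(runs) / avg > max_cv

# Placeholder scores for two made-up tests, three runs each.
results = {
    "test_a": [2150.0, 2147.0, 2153.0],  # tight spread, passes
    "test_b": [131.0, 118.0, 130.5],     # one run is well off, flagged
}

flagged = [name for name, runs in results.items() if needs_rerun(runs)]
print(flagged)
```

A check like this only narrows down what to look at; the flagged tests would still need a manual review before any rerun.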

Running a single chip through our application gauntlet can take upwards of 10 to 12 hours, and you could add several more hours on top of that if we were to run everything manually. Automating applications frees up hands for game testing, allowing multiple machines to be running around the clock.

Power and efficiency testing is also automated, though the approach is different. Instead of relying on software measurements, we use the ElmorLabs PMD2. Both the 24-pin ATX and 8-pin EPS connectors go into the PMD2, and come out the other side to connect to the motherboard. This gives us an accurate measurement of the power actually feeding into the board, rather than leaning on sensors. We still log sensor activity for comparison’s sake, but our power measurements (and resulting efficiency calculations) come from hardware monitoring.
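As a rough illustration of how inline measurements can turn into efficiency figures, the sketch below sums the two power rails and derives average power, energy, and points per watt. The sample format (pairs of 24-pin ATX and 8-pin EPS watts), the function names, and the choice of "score divided by average watts" as the efficiency metric are all assumptions for the example, and the numbers are placeholders.

```python
def average_power(samples):
    """Average total board power in watts from (atx_w, eps_w) pairs."""
    totals = [atx + eps for atx, eps in samples]
    return sum(totals) / len(totals)

def energy_wh(avg_power_w, duration_s):
    """Energy used over a run, in watt-hours."""
    return avg_power_w * duration_s / 3600

def efficiency(score, avg_power_w):
    """Benchmark points per watt (one possible efficiency metric)."""
    return score / avg_power_w

# Placeholder readings: (24-pin ATX watts, 8-pin EPS watts).
samples = [(30.0, 120.0), (32.0, 118.0), (31.0, 119.0)]

avg_w = average_power(samples)
print(avg_w)                     # average watts into the board
print(energy_wh(avg_w, 600))     # watt-hours over a 10-minute run
print(efficiency(3000, avg_w))   # points per watt for a made-up score
```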

Finally, games. As we do with GPUs, our approach with game testing CPUs is “eyes on monitor, hands on keyboard and mouse.” Although we use a few canned benchmarks, our goal with games is to capture what you can expect while playing. That means playing the damn game. We use the same scenes and follow the same paths to get repeatable results, but we are doing the main gameplay activity, not staring at skyboxes. We do this across 17 titles.

How we chose the apps for CPU testing

We test a lot of apps. After a pass, we’re left with somewhere around 200 data points per chip, and that’s for good reason. Although some of the tests we run are redundant — or, rather, test the same type of workload — we include such a broad range to get an accurate overall view of performance, lest a single rogue workload sway our results.

The test suite is broad, but the workloads break down into a handful of categories:

- Office productivity

- Rendering

- Encoding

- Compression and decompression

- Code compilation

- Web applications

- AI inference

- Memory/cache

- Encryption

- Data science

Not all of our benchmarks fit neatly into one of these categories. Geekbench 6 touches on every category, for instance, but doesn’t offer the level of specificity we require. On the other hand, calculating Pi with Y-Cruncher isn’t a direct data science workload like NAMD, but it offers a unique data point for data science and AVX-512 instructions.

Here are the tests we’re running, as well as a bit about why we include them.

| Application | Benchmarks | Why it’s here |
| --- | --- | --- |
| 7-Zip | Compression, decompression | A ubiquitous file compression/decompression tool. |
| LAME | nT, sT | A well-known MP3 encoder. |
| Corona | — | A ray-traced rendering engine. |
| Y-Cruncher BBP | nT, sT | An engine for calculating Pi using Bailey-Borwein-Plouffe digit extraction, accelerated by AVX-512. |
| Cinebench 2024, 2026 | nT, sT | A benchmark for Maxon’s Redshift rendering engine. |
| LuxCoreRender | — | An open-source, physically based renderer. |
| POV-Ray | nT, sT | A ray-traced rendering engine. |
| V-Ray 6 | — | A ray-traced, physically based rendering plugin for design software. |
| Geekbench 6 | nT (overall, FP, Int), sT (overall, FP, Int), ONNX AI (overall, FP32, FP16, Int8), OpenVINO AI (overall, FP32, FP16, Int8) | A comprehensive benchmark suite. |
| PCMark 10 | App startup, rendering and visualization, web browsing | A comprehensive productivity benchmark using open-source software. |
| Procyon Office | PowerPoint, Word, Outlook, Excel | A benchmark suite specifically targeting Microsoft Office. |
| Procyon Vision | Int, FP16, FP32 | An AI inference suite with several models. |
| CPU-Z | nT, sT | A quick, lightweight benchmark that’s often thrown around online. |
| WebXPRT 4 | — | A browser-based benchmark with HTML5, JavaScript, and WebAssembly-based workloads. |
| AIDA | ZLib, AES, SHA3, Memory, Cache | An intense synthetic benchmark with results for compression, encryption, and memory. |
| LinPack | — | A benchmark focused on floating point calculations that’s been around for decades. |
| PugetBench | DaVinci Resolve, Adobe Photoshop, Premiere Pro, After Effects | A comprehensive benchmark suite using Adobe’s creative apps. |
| Handbrake | x264, x265, AV1, VP9 | A free, open-source video transcoding tool. |
| Blender | Junkshop, Monster, Classroom | A popular 3D modeling tool. |
| Phoronix | LeelaChessZero, NAMD, lzbench, Java, JPEGXL, JPEG, WebP, RAW, TSCP, JRipper, Dav1d, Embree, SVT-AV1, Intel Open Image Denoise, OSPRay, Stockfish, asmFish, ebizzy, AVIF, LLVM, C-Ray, FLAC, WinSAT, OptCarrot, PHPBench, Firefox unpack, Tesseract, SQLite, FFTE, MrBayes, DaCapo, Renaissance, Primesieve, OSPRayStudio, OpenSSL, PyBench | An extensive, custom suite of benchmarks covering everything from chess engines to game emulation to code compilation. |
| SPECWorkstation 4 | 7-Zip, Autodesk Inventor, Blender, convolution, data science, Handbrake, hidden line removal, LAMMPS, LLVM Clang, LuxCoreRender, MFEM, NAMD, Octave, ONNX inference, OpenFOAM, options pricing, Poisson, Python 3, Rodinia CFD, Rodinia Life Sciences, SRMP, viewport graphics, WPCstorage | An industry-standard benchmarking suite that covers a wide range of workloads. |

Some of our tests overlap. Some are more relevant than others for consumer CPUs, and others are only relevant for specific types of users. Because of that, we stay conservative when looking at multi-threaded and single-threaded performance overall. The geomeans we use in reviews and for the purposes of our CPU hierarchy only include a small subset of the tests we run so that we’re not overly representing workloads that might not be relevant to everyone.

For multi-threaded performance, we take results from Cinebench nT, POV-Ray nT, V-Ray, Blender, and Handbrake. For single-threaded performance, we use LAME sT, Cinebench sT, POV-Ray sT, and WebXPRT 4. Of course there are other workloads in our suite that are lightly or heavily threaded, but we lean on these tests for geomeans due to how strictly they stick to “multi” or “single” definitions.
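For readers unfamiliar with geomeans, the sketch below shows one common way to build such a score: divide each result by a baseline chip’s result, then take the geometric mean of the ratios. It assumes every score has already been expressed so that higher is better (time-based results would need inverting first). The `relative_geomean` helper and all of the numbers are illustrative, not our actual scoring code.

```python
from statistics import geometric_mean

# Test names taken from the subsets described above.
MULTI_THREADED = ["Cinebench nT", "POV-Ray nT", "V-Ray", "Blender", "Handbrake"]
SINGLE_THREADED = ["LAME sT", "Cinebench sT", "POV-Ray sT", "WebXPRT 4"]

def relative_geomean(chip, baseline, tests):
    """Geomean of per-test ratios (chip / baseline); 1.0 means parity."""
    ratios = [chip[t] / baseline[t] for t in tests]
    return geometric_mean(ratios)

# Placeholder scores: the chip doubles one result and halves another.
baseline = {t: 100.0 for t in MULTI_THREADED}
chip = dict(baseline)
chip["Cinebench nT"] = 200.0
chip["Blender"] = 50.0

score = relative_geomean(chip, baseline, MULTI_THREADED)
print(round(score, 3))
```

Because a geomean multiplies ratios rather than adding raw scores, a test with large absolute numbers cannot dominate the result, and a doubling in one test is offset by a halving in another.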

Most workloads are not strictly single-threaded or multi-threaded; they live somewhere in between. In the case of something like Adobe Premiere Pro, things get even more complicated. Encoding may be heavily-threaded, but timeline scrubbing is lightly-threaded. That’s why we segment many of these tests out.

Of course, in Bench, you’ll be able to browse all of our results and compare them how you wish. In individual reviews, we may not highlight or comment on every test (we often don’t, as a matter of fact), but every test is still run for every chip to give us a complete data set.

In addition to applications, we have power and efficiency tests. Some of the tests are borrowed from the main application suite, while others are used just for power testing. Here are the power tests we run and why they’re included.

| Application | Benchmarks | Why it’s here |
| --- | --- | --- |
| Handbrake | x265, x264, VP9, AV1 | An all-out multi-core workload for measuring peak power during normal usage. |
| Y-Cruncher | nT, sT | A look at the impact of AVX power across both a single- and multi-threaded workload. |
| Blender | Monster, Junkshop, Classroom | Another all-out multi-core workload, this time looking at rendering tasks. |
| Cinebench | nT | A solid foundation for comparing power consumption and efficiency with highly repeatable results. |
| Prime95 | AVX and non-AVX | Theoretical peak power consumption. |
| Idle | — | What the CPU sips when nothing’s going on. |
| Active Idle | — | A small workload with three browser tabs and a YouTube video playing to better measure a low intensity workload that isn’t “true” idle. |

As mentioned, we gather power numbers with the PMD2 to get an accurate view of what’s actually being passed along to the motherboard. We still use software monitoring, though only as a gut check when looking at the final results we’ll present.

How we chose the games for CPU testing

Our test suite currently includes 17 games spread across close to a decade of releases, though with a heavy bias toward new releases. Choosing a gaming test suite for CPUs is complicated, especially in an era of high-fidelity upscaling and frame generation techniques that change the performance equation. That’s in addition to the advent of ray tracing and path tracing effects, which, in most systems, put a heavier burden on the GPU.

Although testing dozens upon dozens of games would be great, we need to actually make it through several generations of hardware. Here are the guiding principles we used when making our choices:

- Repeatability: We lean heavily on custom, in-game benchmark sequences for CPU game testing. We need to see similar results from run to run so we can trust the data we’re gathering and presenting.

- Relevance: Even if some games show performance scaling more clearly than others, we only chose titles that still see high concurrent player counts on tools like SteamDB (>1,500 peak concurrents in a 24-hour period). That disqualifies games you may see benchmarked elsewhere, such as Strange Brigade or Ashes of the Singularity, both of which see around 50 peak concurrent players each day. The one exception is A Plague Tale: Requiem, which sees around 500 peak concurrents a day. We include it due to its unique Zouna engine that scales well with CPUs.

- Scalability: We want to show CPU performance in games. Although we don’t strictly go with CPU-bound titles, we include titles that lean heavier on the CPU. For instance, we use Black Myth: Wukong to test GPUs, but we use Microsoft Flight Simulator 2024 to test CPUs. These titles are still balanced against games that are heavy on the GPU, such as Doom: The Dark Ages and Cyberpunk 2077.

- Stability: Game updates can radically change performance, especially close to release, and these updates can have an outsized impact on CPU performance. We’ve seen cases of this in games like Starfield and The Last of Us Part One near launch, which are now both stable. Because of this, we excluded games that are likely to see rapid updates with major engine changes.

- DRM/Anti-Tamper Protection: Some games, particularly multiplayer games, use anti-cheat or anti-tamper software protection. Depending on the title, these tools can lock you out of accessing a game, especially after major system changes (e.g., a motherboard or CPU swap). With CPUs in particular, we’re essentially presenting the DRM with dozens of new systems for a test pass within a short window of time, which could temporarily lock us out of the game and massively slow down our testing. We don’t categorically exclude games with copy protection, since most have some protection anyway, but we can’t include games that only allow us to test a small amount of hardware at a time.
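The relevance rule in particular is easy to express as a filter. The sketch below applies the 1,500 peak-concurrent cutoff with an explicit exception list, using the approximate player counts quoted above. The function and variable names are hypothetical, and the counts would normally come from a tool like SteamDB rather than being hard-coded.

```python
MIN_PEAK_CONCURRENTS = 1500  # cutoff quoted in the Relevance principle

def is_relevant(title, peak_concurrents, exceptions=frozenset()):
    """Keep a title if it clears the cutoff or is a named exception."""
    return title in exceptions or peak_concurrents > MIN_PEAK_CONCURRENTS

# Approximate daily peak concurrents mentioned in the text.
candidates = {
    "Strange Brigade": 50,
    "Ashes of the Singularity": 50,
    "A Plague Tale: Requiem": 500,
}
exceptions = {"A Plague Tale: Requiem"}  # kept for its engine

kept = [t for t, peak in candidates.items() if is_relevant(t, peak, exceptions)]
print(kept)
```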

Outside of those guiding principles, we’re mainly looking for balance in our test suite. We want a good spread of engines, genres, camera perspectives, and graphics APIs to cover as much ground as possible.

| Game | Engine | API | Canned or in-game? | Why it’s here |
| --- | --- | --- | --- | --- |
| Counter-Strike 2 | Source 2 | DX11 | Canned | One of the most popular games full-stop with frame rates often >500 fps. |
| The Last of Us Part One | Proprietary | DX12 | In-game | A demanding linear game that still shows CPU scaling. |
| Cyberpunk 2077 | REDEngine | DX12 | In-game | The gold standard for PC benchmarking. |
| Starfield | Creation Engine 2 | DX12 | In-game | An open-world game with hundreds of NPCs. |
| A Plague Tale: Requiem | Zouna | DX12 | In-game | A feast for the eyes with a unique engine. |
| Hogwarts Legacy | UE4 | DX12 | In-game | A late UE4 title that’s still surprisingly popular. |
| F1 24 | EGO 4 | DX12 | Canned | The quintessential racing simulator that’s highly repeatable. |
| Spider-Man 2 | Proprietary | DX12 | In-game | A sprawling recreation of NYC that rewards fast CPUs. |
| Baldur’s Gate 3 | Divinity Engine 4 | DX11 | In-game | A complex CRPG with innumerable possible interactions. |
| Monster Hunter Wilds | RE Engine | DX12 | Canned | A demanding open-zone game built on Capcom’s far-reaching RE Engine. |
| Final Fantasy XIV | Proprietary | DX11 | Canned | Still a wildly popular MMORPG. |
| Microsoft Flight Simulator 2024 | Zouna | DX12 | In-game | The de facto flight simulator with ambitious rendering and streaming techniques. |
| DOOM: The Dark Ages | id Tech 8 | Vulkan | Canned | A recent Vulkan release with always-on ray tracing. |
| Oblivion Remastered | UE5 | DX12 | In-game | A recent UE5 release with dozens of NPCs in a given location. |
| Far Cry 6 | Dunia 2 | DX12 | Canned | One of our oldest benchmarks that can provide good comparison points across several generations. |
| Hitman 3 | Glacier 2 | DX12 | In-game | A sandbox-style game with complex simulations. |
| Minecraft | Proprietary | DX12 | In-game | A stress test with the maximum 96 render chunk distance. |

This is the list of games we’re testing now, with a mixture of newer additions and some of our old favorites that provide a good sanity check as we’re flipping chips. About a third of our benchmarks are canned, compared to two-thirds that are in-game. We like to include both with a heavier emphasis on in-game benchmarks, as some canned benchmarks give us more repeatable results in otherwise random games; Monster Hunter Wilds and Counter-Strike 2 are good examples of that.

This list isn’t static. It’s what we’re using for this retest for Bench, but it will evolve as time goes on and new games are released. Upcoming releases like Crimson Desert, Marathon, Death Stranding 2, Pragmata, and 007 First Light are all interesting candidates, and that only takes us to May 2026. We’ll revisit this list once we do another pass for Bench, but you may see newer titles start to show up in reviews before then.

Gaming test bench — hardware, resolution, settings, and DLSS

We use identical benches across games and apps, short of one component: the GPU. We use the RTX 2080 Super FE for apps, not for its processing power (no compute is actively running on the GPU), but as a glorified display output. The main reason we use it is to keep the drivers consistent across games and apps. For games, we’re using the RTX 5090 FE. We could technically use the RTX 5090 FE across games and apps, though that would lead to unnecessary power draw and somewhere in the range of $20,000 in expenses to run multiple systems at the same time. We don’t need a bunch of RTX 5090s lying around acting as paperweights if we’re not testing games.

In addition to the hardware, there are a few specific settings we change for our game benchmarking. We turn Virtualization-Based Security (VBS) off in Windows, enable Resizable BAR, and manually disable any automatic overclocking profiles. That includes PBO for AMD and the Performance and Extreme power profiles for Intel. Although many motherboards default to these settings out of the box, they aren’t technically covered under warranty, so we manually disable them for testing.

The more interesting conversation is around resolution, game settings, and upscaling like DLSS. Frame generation isn’t really a factor here. It calls for a small amount of compute, which runs on your GPU, bypassing the traditional rendering pipeline and ignoring the CPU entirely.

Starting with game settings, we test with a combination of High or Ultra settings, depending on the game, and with ray tracing disabled. The one exception is DOOM: The Dark Ages, which has always-on ray tracing. Ray tracing is an important pillar of modern PC games, but we’re still in a time where it’s optional if you have performance overhead. As we see more games like DOOM: The Dark Ages and Alan Wake 2 with always-on ray tracing, we’ll reevaluate this position.

We test CPUs at 1080p without any upscaling assistance. That’s because 1080p performance says a lot about how a CPU performs across resolutions in a time of ubiquitous upscaling. As you can read in our investigation into CPU scaling with DLSS enabled, we can see CPU scaling all the way up to 4K with upscaling enabled. Those deltas narrow or completely disappear as the resolution climbs with native rendering, but even top-shelf hardware struggles to run the most demanding games at 4K without some upscaling assistance.

Testing across the three main 16:9 resolutions already triples game testing time, and adding DLSS or other upscalers into the mix would further multiply the testing time. It takes about 10 hours for a full pass of our test suite per chip; extending that out to 40 or 50 hours per chip just isn’t tenable right now. We’d have to massively shrink our suite of games, which we believe would give us less useful data overall.
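The arithmetic behind those figures can be sketched in a few lines. This assumes each extra configuration (a native resolution or an upscaled setup) costs roughly one full 10-hour pass, which is our simplification for illustration. The `total_hours` helper is hypothetical.

```python
HOURS_PER_PASS = 10  # roughly one full game-suite pass per chip

def total_hours(native_resolutions, upscaled_configs=0):
    """Estimated hours per chip if every configuration costs one pass."""
    return HOURS_PER_PASS * (native_resolutions + upscaled_configs)

print(total_hours(1))     # native 1080p only
print(total_hours(3))     # three native 16:9 resolutions
print(total_hours(3, 1))  # plus one upscaled configuration
print(total_hours(3, 2))  # plus two upscaled configurations
```

Multiply any of those per-chip totals by hundreds of CPUs and it becomes clear why we keep the game suite broad and the resolution count small.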

Instead, we are focused on making our test suite as varied as possible, given the variable role your CPU plays in each individual game. Testing at native 1080p also lets us extrapolate up to higher resolutions with upscaling in the mix. We see largely similar scaling at 1440p with Quality or Balanced DLSS, and, depending on the game, even similar scaling up to 4K with Performance mode.

What’s next

Over the coming weeks, you’ll start to see our new test results show up in Bench. For some of the older CPUs we’re testing, this is the last time we plan to take a look at them in our standard benchmarking suite. We’re still keeping the last several generations in our test pool moving forward, but you might not see, for example, 6th- and 7th-gen Intel chips as we continue to evolve our testing suite over time. We’re setting the ground truth with these older chips now for historical purposes, and you’ll be able to reference those results against newer versions of our test suite to gauge relative performance deltas.

As new games are released and new versions of popular benchmarks roll out, we’ll update our suite, though not immediately. We need to understand the benchmarks we’re running before presenting data from them, especially when it comes to a database like Bench. The bar is much lower for something like a review, where we can incorporate a new workload without upsetting our established suite.
