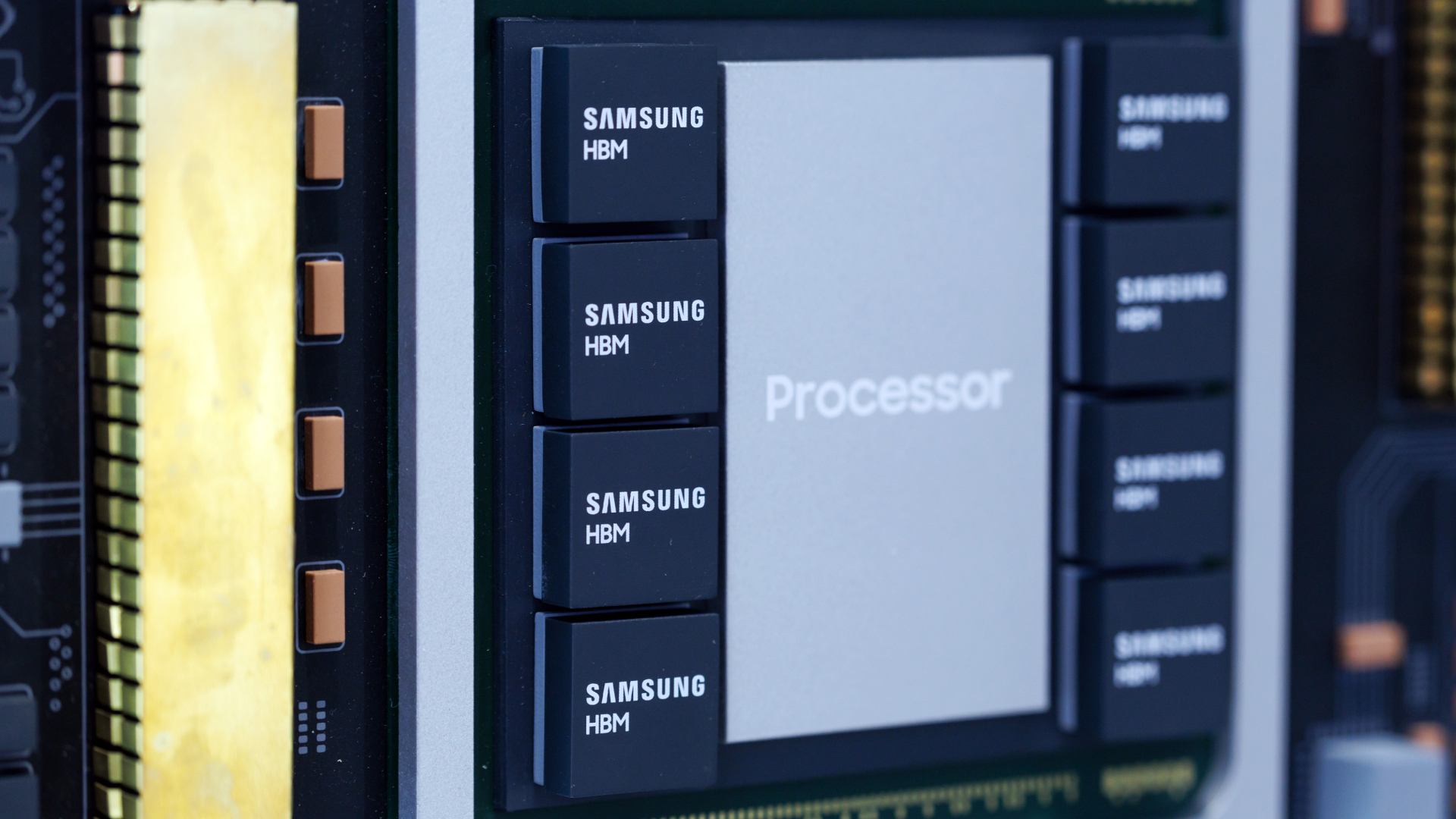

After criticizing leading chipmakers for slow capacity expansion and claiming his companies need 100 – 200 billion AI processors annually, Elon Musk last week unveiled TeraFab — a chipmaker aiming to produce logic chips, HBM4 memory, and advanced packaging under one roof. Backed by an initial ~$20 billion investment, the project targets manufacturing chips consuming 1 terawatt (1 TW) of power per year using leading-edge process technology within the next several years.

But an exhaustive analysis by Tom’s Hardware Premium reveals so many factors working against TeraFab, an effort designed primarily to produce chips in-house, that it appears highly unrealistic — at best a step towards partial vertical integration for Tesla, SpaceX, and xAI.


A question of capital

Money is the most obvious challenge for Elon Musk’s chip venture. To build 1 TW of AI silicon per year, TeraFab will need to process the equivalent of 22.4 million Rubin Ultra GPU wafers, 2.716 million Vera CPU wafers, and 15.824 million HBM4E wafers per year, according to estimates from premier semiconductor analysis firm Bernstein. To do so, TeraFab will need between 142 and 358 fabs, the report claims.

Bernstein’s calculations are based on a top-down conversion of compute demand into semiconductor manufacturing requirements. They start with Musk’s goal of 1 TW of annual AI compute and translate it into the number of AI racks needed, using assumptions about Nvidia’s rack power (e.g., 120 kW to 600 kW), GPUs per rack, and system architectures similar to Nvidia’s Blackwell and Rubin platforms.
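The first step of that conversion, from a power target to a rack count, can be sketched in a few lines of Python. This is a minimal illustration rather than Bernstein’s actual model: the helper name `racks_needed` is hypothetical, and only the 1 TW target and the 120 kW and 600 kW rack-power bounds come from the text above.

```python
# Minimal sketch: convert a 1 TW power target into a rack count.
# Assumption: every rack draws exactly its rated power. racks_needed is a
# hypothetical helper, not part of Bernstein's published model.

TARGET_POWER_KW = 1_000_000_000  # 1 TW expressed in kilowatts


def racks_needed(rack_power_kw: int) -> int:
    """Smallest whole number of racks whose combined draw reaches the target."""
    return -(-TARGET_POWER_KW // rack_power_kw)  # integer ceiling division


low_density_racks = racks_needed(120)   # lower rack-power bound from the text
high_density_racks = racks_needed(600)  # upper rack-power bound from the text

print(f"120 kW racks: {low_density_racks:,}")
print(f"600 kW racks: {high_density_racks:,}")
```

Under these assumptions, the target works out to somewhere between roughly 1.7 million and 8.3 million racks before any chips or wafers are counted.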

They then convert those systems into chip, wafer, and fab demand using fixed assumptions for die sizes (e.g., ~825 mm² GPUs, ~800 mm² CPUs), HBM configurations, and yields. This is where Bernstein’s analysis gets a bit rough: The firm assumes the capacity of a modern fab is 50,000 Wafer Starts Per Month (which is too high for a leading-edge logic fab, and too low for a DRAM fab) and that it costs $35 billion to build (which is not enough for a 50K WSPM logic fab, but may be too high for a DRAM fab). These assumptions increase the estimated costs of the whole project; while the ballpark of several trillion seems to be correct, $5 trillion may be too high.

A modern leading-edge logic fab (or rather, a phase of a fab) typically has a production capacity of around 20,000 wafer starts per month (WSPM), so such a facility completes roughly 240,000 wafers per year, assuming stable operation and no major yield or downtime losses. Assuming that CPUs and GPUs are made on the same node, producing these logic processors (25.116 million wafers per year) would take 105 leading-edge wafer fabs, given a roughly 100% yield and no downtime.
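The fab count follows from simple division. The sketch below reproduces it in Python using only the wafer volumes and the 20,000 WSPM capacity quoted above; the variable names are illustrative, and the calculation assumes perfect yield and zero downtime, as the text does.

```python
import math

# Figures quoted in the article (Bernstein estimates and a typical fab phase).
GPU_WAFERS_PER_YEAR = 22_400_000
CPU_WAFERS_PER_YEAR = 2_716_000
FAB_CAPACITY_WSPM = 20_000  # wafer starts per month for one logic fab phase

# Assumption: GPUs and CPUs share one node, so their wafers can be pooled.
logic_wafers_per_year = GPU_WAFERS_PER_YEAR + CPU_WAFERS_PER_YEAR
wafers_per_fab_per_year = FAB_CAPACITY_WSPM * 12

# Round up: a fractional fab still has to be built as a whole fab.
logic_fabs = math.ceil(logic_wafers_per_year / wafers_per_fab_per_year)

print(f"Logic wafers per year: {logic_wafers_per_year:,}")
print(f"Wafers per fab per year: {wafers_per_fab_per_year:,}")
print(f"Logic fabs needed: {logic_fabs}")
```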

For context, TSMC shipped 15.023 million 300-mm-equivalent wafers in 2025 — the foundry’s biggest year ever — which includes millions of outdated 200mm wafers and 300mm wafers processed on legacy process technologies.

A modern leading-edge 2nm-class logic fab costs roughly $25 billion to $35 billion. And while the economies of scale drive costs down, we are still talking about a $30 billion ballpark per fab, which means about $3.15 trillion for logic fabs alone, assuming there are near 100% yields and no production disruptions.

Keeping in mind that TeraFab will be a new kid on the block, it’s unrealistic to expect its yields will be close to 100%; assuming instead an 80% yield, more capacity will be needed, bringing the logic fab number to 126 and the total capacity investment to $3.78 trillion. To put the number into context, TSMC currently operates between 35 and 50 300mm fab modules that have been constructed across several decades.
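The capital figures for logic can be checked the same way. The following Python sketch multiplies the article’s two fab counts by its $30 billion ballpark; `logic_capex_trillion` is a hypothetical helper name. Note that the 126-fab figure corresponds to scaling 105 fabs up by 20%, whereas dividing 105 by an 80% yield would give about 131 fabs, so the sketch simply keeps the article’s number.

```python
COST_PER_FAB_BILLION = 30  # article's ballpark for one 2nm-class logic fab

FABS_AT_FULL_YIELD = 105   # from the wafer-volume calculation in the text
FABS_YIELD_ADJUSTED = 126  # article's figure for an 80% yield (105 * 1.2);
                           # 105 / 0.8 would instead give about 131


def logic_capex_trillion(fab_count: int) -> float:
    """Total logic fab investment in trillions of dollars."""
    return fab_count * COST_PER_FAB_BILLION / 1_000


ideal_capex = logic_capex_trillion(FABS_AT_FULL_YIELD)
adjusted_capex = logic_capex_trillion(FABS_YIELD_ADJUSTED)

print(f"Near-100% yield: ${ideal_capex:.2f} trillion")
print(f"Yield-adjusted:  ${adjusted_capex:.2f} trillion")
```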

Memory fabs are cheaper than logic fabs and have higher capacities due to the nature of the DRAM market, but we are still talking about tens of billions of dollars per fab. A modern DRAM fab usually has production capacity between 100,000 and 200,000 WSPM, which means that with a mid-point capacity of 150,000 WSPM, one will need around 9 fabs to produce 15.824 million HBM4E wafers.


That being said, for HBM memory, effective capacity is significantly constrained by yield, stacking, and packaging, not just wafer starts. As a result, while a DRAM fab may process hundreds of thousands of wafers per month, only a fraction of that output can be converted into high-end HBM, which is why memory may become a bottleneck. In any case, with a 70% yield for HBM, we are still talking about at least 12 fabs each costing at least $20 billion, or $240 billion in total.
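A rough Python sketch of the memory-side arithmetic is shown below. It treats yield as a simple divisor on wafer starts, which is a simplifying assumption, since the text notes that stacking and packaging losses also constrain HBM output. All variable names are illustrative.

```python
import math

HBM_WAFERS_PER_YEAR = 15_824_000  # Bernstein estimate quoted in the article
DRAM_FAB_WSPM = 150_000           # mid-point of the 100,000-200,000 range
HBM_YIELD = 0.70                  # yield assumed in the article
COST_PER_DRAM_FAB_BILLION = 20    # article's lower bound per DRAM fab

wafers_per_fab_per_year = DRAM_FAB_WSPM * 12

# Near-perfect yield: round up to whole fabs.
fabs_at_full_yield = math.ceil(HBM_WAFERS_PER_YEAR / wafers_per_fab_per_year)

# At 70% yield, more wafer starts are needed for the same good output.
fab_equivalents = HBM_WAFERS_PER_YEAR / HBM_YIELD / wafers_per_fab_per_year

# The article's "at least 12" is the floor of this value; rounding up
# to whole fabs would give 13.
min_fabs = math.floor(fab_equivalents)
min_capex_billion = min_fabs * COST_PER_DRAM_FAB_BILLION

print(f"Fabs at full yield: {fabs_at_full_yield}")
print(f"Fab equivalents at 70% yield: {fab_equivalents:.2f}")
print(f"Minimum DRAM capex: ${min_capex_billion} billion")
```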

Bear in mind that the figure covers front-end wafer capacity only. To put this enormous cost into context, the Big Three DRAM makers (Samsung, SK hynix, and Micron) currently operate three dozen DRAM fab modules built since the early 2000s.

2.5D and 3D packaging facilities are fairly expensive, though at $2 – $3.5 billion per phase, clearly cheaper than logic fabs. Yet keeping in mind that TeraFab will need tens, or maybe even hundreds, of advanced packaging facilities to integrate AI processors and assemble HBM stacks, the company will need to invest hundreds of billions in advanced chip packaging.

In total, it looks like TeraFab must invest well north of $4 trillion to meet Elon Musk’s goal of producing AI chips that would consume 1 terawatt of power per year, not including land acquisition, development of process technologies, software development, and ecosystem buildout. Yet Bernstein’s calculations are even more aggressive as analysts from the company believe that investments in TeraFab will be around $5 trillion.

Raising $5 trillion would be extraordinarily difficult. For context, even the largest companies like Nvidia, Apple, and Alphabet have market capitalizations of $4.34 trillion, $3.71 trillion, and $3.5 trillion, respectively, at the time of writing, so Musk would need to mobilize capital exceeding the value of any of the world’s most valuable corporations. It’s hard to imagine private fundraising, a consortium, or even a sovereign deal of this scale.

Perhaps the only way for Elon Musk to fund this initiative is to seek backing from multiple governments, sovereign wealth funds, and hyperscalers at once, while also tapping capital markets. While applying for government support does not hurt per se, it is extremely unlikely that he will get such funding. After all, the onshoring trend in the semiconductor industry limits the number of governments likely willing to invest in TeraFab to one. Yet even the U.S. government would find it hard to invest $5 trillion, given the country’s annual budget of around $7 trillion.

Equipment and raw materials supply chain

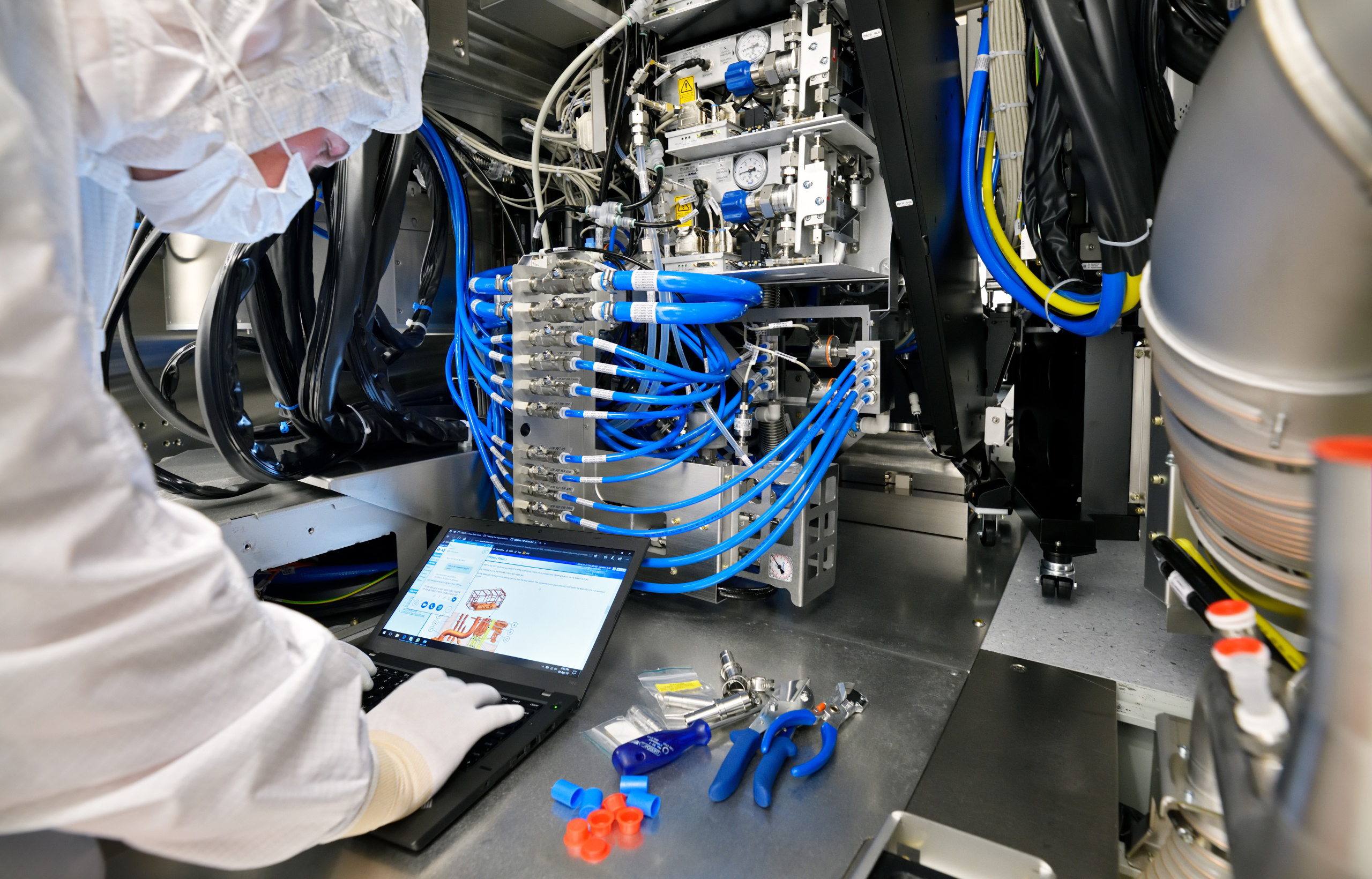

At a scale of $5 trillion in the semiconductor industry within a foreseeable timeframe, constraints would extend well beyond capital and would include limited availability of equipment, construction materials, raw materials, and a sufficiently large, skilled workforce to build, operate, and maintain TeraFab’s futuristic fabs.

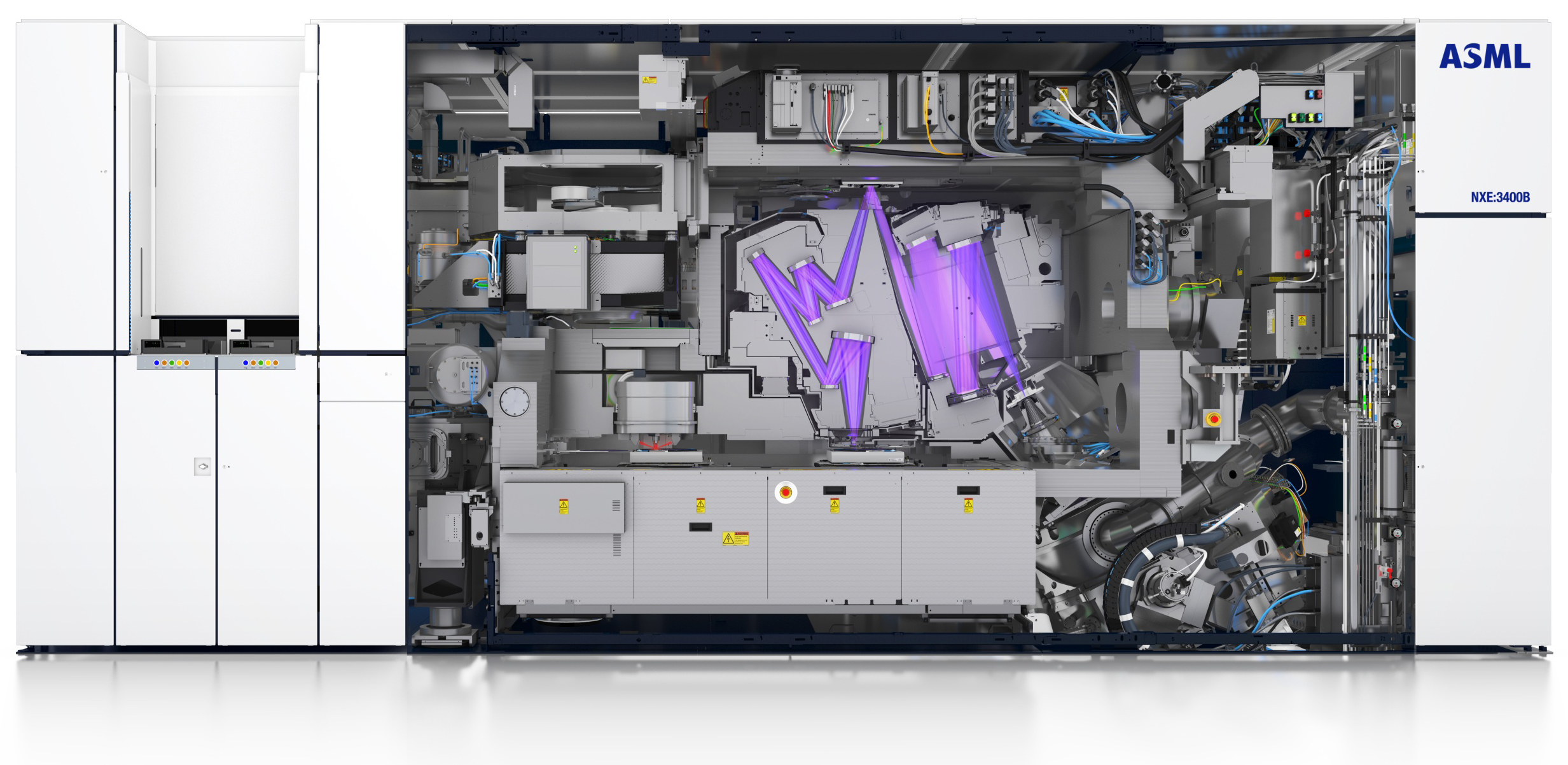

A modern 3nm-class logic fab with a capacity of 20,000–30,000 WSPM requires 80 – 100 DUV + EUV lithography scanners, hundreds of baking and developing tools, hundreds of etching tools, hundreds of deposition tools, and over 100 metrology and inspection tools, as well as hundreds of other tools that process wafers.

In addition, a fab uses thousands of various utility subsystems (pumps, generators, chemical delivery systems, specialty gas delivery systems, etc.) that make things happen. Exact fab configurations are unknown, and many tools are clustered with multiple process chambers, so the tool count understates the number of actual processing modules. DRAM fabs use fewer tools because it is easier to make memory than logic. Nonetheless, we are still talking about thousands of litho, deposition, metrology, and inspection tools per fab.

Since leading-edge logic process technologies are particularly lithography-intensive (even though some EUV multi-patterning sequences may be substituted by machines like Applied Materials’ Centura Sculpta), let’s assume that a TeraFab logic fab will need 100 DUV + EUV lithography systems, which means that 126 of these fabs will need 12,600 lithography tools for logic. For context, ASML shipped 48 EUV and 131 ArFi DUV scanners in 2025 (for a total of 179 scanners), up from 44 EUV and 129 immersion DUV machines (173 in total) a year before.

As a result, it will take ASML 70 years at its current production rate to equip TeraFab with lithography scanners for logic production alone (not counting scanners for DRAM manufacturing), and that’s only if it exclusively supplies them to Musk’s company.
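The 70-year figure can be reproduced with a short Python sketch. It uses only numbers from the text and assumes, as the article does, that ASML’s entire EUV and immersion DUV output would go to TeraFab at the 2025 shipment rate; the variable names are illustrative.

```python
LOGIC_FABS = 126        # yield-adjusted logic fab count from the article
SCANNERS_PER_FAB = 100  # article's assumed DUV + EUV systems per logic fab

# ASML shipments quoted in the article, by scanner type.
ASML_SHIPMENTS_2025 = {"EUV": 48, "ArFi DUV": 131}
ASML_SHIPMENTS_2024 = {"EUV": 44, "ArFi DUV": 129}

scanners_needed = LOGIC_FABS * SCANNERS_PER_FAB
shipped_2025 = sum(ASML_SHIPMENTS_2025.values())
shipped_2024 = sum(ASML_SHIPMENTS_2024.values())

# Assumption: output stays flat at the 2025 rate and all of it goes to TeraFab.
years_to_equip = scanners_needed / shipped_2025

print(f"Scanners needed for logic: {scanners_needed:,}")
print(f"ASML shipments: {shipped_2024} (2024), {shipped_2025} (2025)")
print(f"Years to equip at the 2025 rate: {years_to_equip:.1f}")
```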


ASML has been gradually increasing its output of EUV and ArFi DUV scanners for some time, as these machines are exclusively used for advanced nodes by companies like TSMC. That being said, ASML’s output depends not only on its own production capacity, but also on that of its suppliers, as the company integrates tens or even hundreds of thousands of components into every scanner. Increasing ASML’s output meaningfully is hard, but it is even harder to increase the output of all its suppliers. Getting 12,600 lithography tools in a short amount of time is quite literally impossible.

Also, keep in mind that ASML currently employs 44,000 people. If it needs to assemble 70X more litho systems (assuming it gets enough components), it will probably need to increase its headcount to match the scale of a Foxconn or Walmart, rather than a semiconductor tool company.

The same applies to other suppliers of wafer fabrication equipment: They can produce a limited number of tools, and their suppliers can make them a limited number of components. Therefore, getting millions of process chambers in a few years is simply impossible.

Finally, getting enough raw materials of the extreme purity required for leading-edge chip production for a venture that is larger than Intel, Samsung, and TSMC combined (and we’re not even talking about memory here) will be problematic, as materials suppliers will have to expand their supply chains as well. Still, it should be easier than scaling production of lithography tools.

Time is not on their side

Now that we’ve mentioned capital and supply-chain challenges for a semiconductor venture of TeraFab’s scale, it’s time to talk about the thing that has driven multiple chipmakers out of business in recent decades: leading-edge process technologies.

Developing a modern, leading-edge fabrication technology requires billions of dollars and a lot of time, and while Elon Musk tends to raise enough money for his projects, time is something money cannot buy.

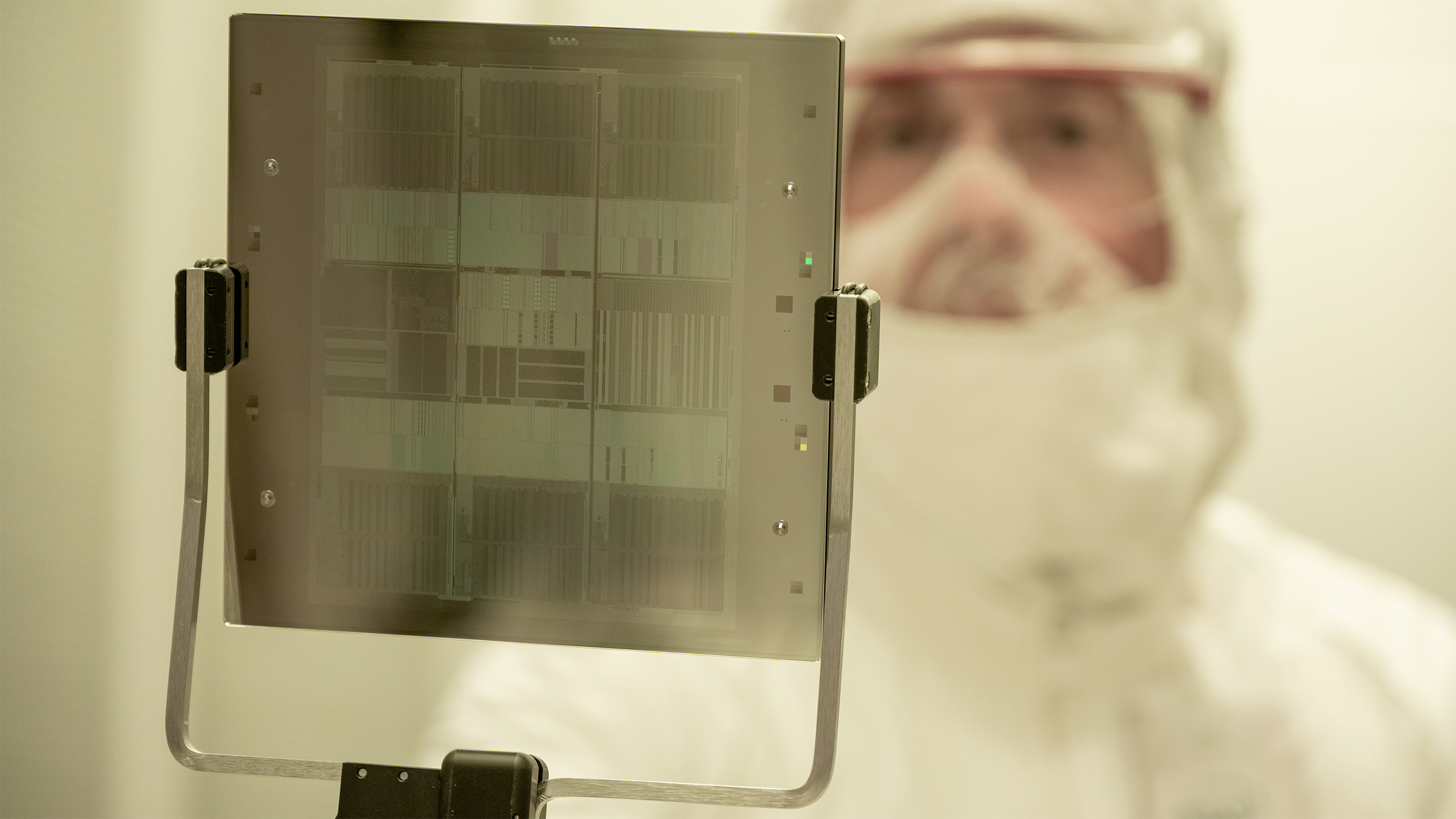

Development of a new leading-edge process technology is a 5+ year effort that begins with pathfinding, materials research, and transistor architecture exploration. Once the transistor architecture is figured out, engineers run countless simulations to model key physical effects such as doping profiles, strain engineering, and leakage behavior to tune these characteristics in a bid to achieve their design specifications for the whole node. This is arguably the only step in process technology development that can be licensed. For example, Rapidus licensed a 2nm GAA transistor design from IBM. Imec and CEA-Leti can also license some of their transistor-related technologies, but that’s about it.

Once the transistor concept is finalized (or licensed), the real work begins: Engineers must construct hundreds of tightly interdependent process steps across FEOL, MOL, and BEOL modules. These steps cover everything from transistor formation to interconnect and metallization, as well as require atomic-scale precision in deposition, etch, lithography, and annealing. Every stage involves hundreds, or even thousands, of tunable parameters, all of which must be optimized for yield, performance, power efficiency, defectivity, and long-term reliability — a process that depends heavily on accumulated expertise, rather than licensable IP.

After individual steps are stabilized, integration becomes the primary challenge. Engineers combine modules — such as gate stacks and source/drain structures — into a logical process flow and tune sequencing and thermal budgets to avoid contamination and material degradation. On paper, this sounds like an easy task, but this integration phase effectively defines the node; it must be done in a development fab, and it cannot be outsourced or licensed.

Once device performance and yield targets are achieved in the development fab, the process must be made usable for chip designers through PDKs, SPICE models, and validated standard-cell libraries. All of these take hundreds or thousands of engineers and a lot of time.

Finally, the process technology must be transferred to a high-volume manufacturing fab, which introduces another layer of complexity. Achieving stable, high yields in a production environment is a long, iterative process that requires experienced engineering teams and continuous refinement. Even with abundant funding, this stage cannot be accelerated easily.

As a result, the key question remains whether a company that starts its semiconductor efforts from scratch can realistically complete this entire cycle — from concept to mass production — within five years. Rapidus will demonstrate whether it is possible in 2027, when it intends to start trial production of chips on its 2nm-class fabrication process.

It will be the 2030s before TeraFab can actually output chips using its own manufacturing technologies. Theoretically, if Tesla, SpaceX, and xAI have limitless demand for AI processors, TeraFab could potentially license process technologies from Tesla’s foundry partners, though it remains to be seen whether production nodes can indeed be licensed and integrated in a reasonable amount of time into an existing fab.

The workforce shortfall

To meet Elon Musk’s 1 TW of compute per year goal, TeraFab must operate over 150 fabs (well, fab modules, or phases) and plenty of advanced packaging facilities. These fabs and facilities must be built by people, and as TSMC discovered with its Fab 21 phase 1 in Arizona, qualified construction workers are hard to find. Finding people who will run these fabs is even harder.

A leading-edge fab employs between 4,000 and 7,000 construction workers on site at peak, depending on the scale. When we talk about the full build cycle, there are usually 10,000 or more workers involved; for example, TSMC expects 40,000 construction jobs to be created as a result of its expansion in Arizona. To complete Fab 21 phase 1, the company had to send 500 additional workers from Taiwan, perhaps because local workers were unfamiliar with TSMC’s procedures.

The 150+ fabs required by TeraFab will necessitate hundreds of thousands of construction workers, which will inevitably create a labor bottleneck, especially for highly specialized workers required for cleanroom and sub-fab systems.
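To get a feel for the scale, the peak construction workforce can be estimated in Python from the per-fab figures above. This is a sketch under stated assumptions: `peak_construction_workforce` is a hypothetical helper, and the scenario in which one third of the modules are under construction at the same time is purely illustrative, not something the article specifies.

```python
FAB_MODULES = 150          # lower bound on fab modules from the article
PEAK_WORKERS_LOW = 4_000   # on-site peak per leading-edge fab (low end)
PEAK_WORKERS_HIGH = 7_000  # on-site peak per leading-edge fab (high end)


def peak_construction_workforce(concurrent_modules: int,
                                workers_per_module: int) -> int:
    """On-site workers needed if this many modules peak at the same time."""
    return concurrent_modules * workers_per_module


# Worst case: all modules reach peak construction simultaneously.
all_at_once_low = peak_construction_workforce(FAB_MODULES, PEAK_WORKERS_LOW)
all_at_once_high = peak_construction_workforce(FAB_MODULES, PEAK_WORKERS_HIGH)

# Hypothetical staggered build: one third of the modules at a time.
staggered_modules = FAB_MODULES // 3
staggered_low = peak_construction_workforce(staggered_modules, PEAK_WORKERS_LOW)
staggered_high = peak_construction_workforce(staggered_modules, PEAK_WORKERS_HIGH)

print(f"All at once: {all_at_once_low:,} to {all_at_once_high:,} workers")
print(f"One third at a time: {staggered_low:,} to {staggered_high:,} workers")
```

Even the staggered scenario lands in the hundreds of thousands, which is consistent with the estimate in the text.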

Once the fabs are built, they will need employees with very specific skills. All leading-edge fabs are highly automated manufacturing facilities, but they still employ thousands of people to manage, serve, and maintain them. TSMC’s 20,000 WSPM Fab 21 phase 1 currently employs around 3,000 people (which includes plenty of management roles that will not be required for subsequent phases), whereas Intel’s 40,000 WSPM Fab 52 has created 3,000 high-tech manufacturing jobs and 3,000 tool technician jobs in the area, along with thousands of indirect jobs.

Even assuming that next-generation advanced fab modules will require just 1,500 employees, Elon Musk’s venture will need over 300,000 highly skilled people. To put the number into context, TSMC had 83,825 full-time employees serving in various capacities as of December 31, 2024. Where TeraFab can find 300,000, and whether this can be done at all, is hard to fathom.

Reality check

Elon Musk’s TeraFab aims to produce AI logic chips and HBM memory consuming 1 TW of power per year. That requires trillions of dollars and hundreds of fabs, which is far beyond current industry capacity in terms of capital, supply-chain capabilities, and skilled workforce availability.

Beyond capital, equipment constraints are severe: 126 logic fabs would require 12,600 lithography tools, while ASML shipped only 179 scanners in 2025, and there is no way it can scale up production within a reasonable timeframe.

Process technology development remains a 5+ year effort that involves hundreds of tightly integrated steps, extensive simulations, and yield optimization that cannot be licensed or accelerated easily, even with access to partners like IBM or imec.

Finally, TeraFab would require hundreds of thousands of construction workers and over 300,000 skilled employees.

Given all the capital and supply-chain limits, the project in its current form looks quite unrealistic at full scale. Yet if this is an element of partial vertical integration that will be used to make some of the chips that Tesla, SpaceX, and xAI require in-house, then why not? Perhaps Musk’s real goal is far less ambitious: success for his other ventures, rather than wholesale transformation of the entire global semiconductor market.
