Today, we’re benchmarking and analyzing one of Nvidia’s most interesting new technologies in development: RTX Neural Texture Compression (NTC), an AI-driven technology that uses Tensor Cores to compress and decompress data, thus reducing VRAM requirements by up to 80%.

When Nvidia unveiled the RTX 50-series graphics cards, the company also announced several neural rendering technologies alongside those GPUs. These technologies improve the representation of materials, provide more efficient compression of textures, and increase the quality of indirect light through inferred path-traced rays.


Today, we will focus on one of these technologies in particular – RTX Neural Texture Compression. We’ll break down how NTC works, then benchmark it on a number of GPUs, and also share insights from Alexey Panteleev, a Distinguished DevTech Engineer at Nvidia and NTC developer.

What is RTX Neural Texture Compression?

RTX Neural Texture Compression (NTC) is a machine learning-based method for texture compression and decompression. It can run in three different modes in DirectX 12: Inference on Load, Inference on Sample, and Inference on Feedback. In Vulkan, Inference on Feedback is not supported, so the only two available modes are Inference on Load and Inference on Sample.

The compression phase consists of the original textures being transformed into a combination of weights for a small neural network and latent features. In Inference on Sample mode, the decompression phase consists of reading the latent data and then performing an inference operation by passing it through a small Multi-Layer Perceptron (MLP) network whose weights were determined during the compression phase. Each texel is decompressed when needed. NTC is deterministic – it is not generative.
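To make the decode step more concrete, here is a toy Python sketch of what "inference" means for a single texel: a latent vector is passed through a tiny MLP whose weights are fixed, and the outputs are the texture channels. The layer sizes, the weights and the ReLU activation are made-up assumptions chosen for illustration, not NTC's actual network, and the step of reading the latent data for the texel is omitted. Because there is no randomness anywhere in the decode, the same latents always produce the same texel, which is what "deterministic, not generative" means in practice.

```python
from typing import List, Sequence, Tuple

Vector = List[float]
Layer = Tuple[List[List[float]], List[float]]  # (weight matrix, bias vector)

def matvec(w: Sequence[Sequence[float]], x: Sequence[float], b: Sequence[float]) -> Vector:
    """Dense layer: y = W x + b."""
    return [sum(wij * xj for wij, xj in zip(row, x)) + bi for row, bi in zip(w, b)]

def relu(v: Sequence[float]) -> Vector:
    return [max(0.0, a) for a in v]

def decode_texel(latent: Sequence[float], layers: Sequence[Layer]) -> Vector:
    """Run one texel's latent vector through the MLP (ReLU on hidden layers only)."""
    x = list(latent)
    for i, (w, b) in enumerate(layers):
        x = matvec(w, x, b)
        if i < len(layers) - 1:
            x = relu(x)
    return x

# Hypothetical weights "found during compression": 4 latents -> 3 hidden -> 2 channels.
LAYERS: List[Layer] = [
    ([[0.5, -0.2, 0.1, 0.0],
      [0.3, 0.8, -0.5, 0.2],
      [-0.1, 0.4, 0.6, -0.3]], [0.0, 0.1, 0.0]),
    ([[1.0, 0.5, -0.5],
      [0.2, -0.3, 0.9]], [0.05, 0.0]),
]

latent = [0.2, 0.7, 0.1, 0.4]   # hypothetical latent features for one texel
out = decode_texel(latent, LAYERS)
print(out)
```

A real decoder runs this per texel inside a shader, which is why hardware matrix acceleration matters so much for the Inference on Sample mode.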

To reduce visual artifacts, NTC uses Stochastic Texture Filtering (STF), which introduces randomness to produce filtered textures. Blackwell GPUs offer a 2x improvement in point-sampled texture filtering rate, so STF runs particularly fast on these graphics cards.
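The general idea behind stochastic filtering can be shown with plain bilinear filtering. Instead of fetching four texels and blending them, each sample fetches a single texel (a point sample), chosen with probability equal to its bilinear weight. On average this matches the bilinear result, but any individual sample is noisy. The Python sketch below is a generic illustration of that technique under simple assumptions (a one-channel checkerboard texture and texel-space coordinates), not NTC's shader code; one plausible reason it pairs well with neural decoding is that only one texel has to be decoded per sample instead of four.

```python
import math
import random

def bilinear_taps(u, v):
    """Four (x, y, weight) taps of a bilinear filter at texel-space coords (u, v)."""
    x0, y0 = math.floor(u - 0.5), math.floor(v - 0.5)
    fx, fy = (u - 0.5) - x0, (v - 0.5) - y0
    return [
        (x0, y0, (1 - fx) * (1 - fy)),
        (x0 + 1, y0, fx * (1 - fy)),
        (x0, y0 + 1, (1 - fx) * fy),
        (x0 + 1, y0 + 1, fx * fy),
    ]

def bilinear(tex, u, v):
    """Deterministic filtering: fetch all four texels and blend them."""
    return sum(w * tex(x, y) for x, y, w in bilinear_taps(u, v))

def stochastic_bilinear(tex, u, v, rng):
    """Stochastic filtering: fetch ONE texel, picked with probability equal to its weight."""
    r = rng.random()
    taps = bilinear_taps(u, v)
    for x, y, w in taps:
        if r < w:
            return tex(x, y)
        r -= w
    x, y, _ = taps[-1]  # fallback for floating-point round-off
    return tex(x, y)

def checker(x, y):
    """Hypothetical one-channel texture: a black/white checkerboard."""
    return float((x + y) % 2)

rng = random.Random(42)
samples = [stochastic_bilinear(checker, 1.3, 2.7, rng) for _ in range(20000)]
print(bilinear(checker, 1.3, 2.7), sum(samples) / len(samples))
```

Every individual sample is either 0.0 or 1.0, even though the filtered value is about 0.68. That per-sample noise is what anti-aliasing later has to average away.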

The aforementioned decompression technique is referred to as Inference on Sample, which is what most people think of when neural texture compression is discussed. It offers the greatest reduction in VRAM consumption, but it may also be impractical for some GPUs due to its performance cost. Luckily, there are solutions for lower-end hardware, too.

Inference on Load decompresses NTC textures during game or map load, and transcodes them into block-compressed formats (BCn) at the same time. The decompression is done entirely on the GPU. In practice, this preserves performance at the same level as block-compressed textures, so there is no performance penalty like there is with Inference on Sample. It also benefits from a significant reduction in the texture footprint on disk and reduced PCIe traffic. The downside is that it does not provide a reduction in VRAM usage compared to block-compressed textures.

Inference on Feedback uses Sampler Feedback and decompresses only the set of texture tiles that are required to render the current view. This mode offers a compromise between the previous two modes. It provides a large reduction in VRAM usage, albeit not at the same level as Inference on Sample. This is because Sampler Feedback requires additional heap memory allocation. Its performance is typically somewhere in between Inference on Load and Inference on Sample.
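Inference on Feedback can be pictured as a residency loop: the sampler feedback pass reports which texture tiles the current view touched, and only tiles that are not already resident get decoded. The Python sketch below models just that bookkeeping. The (mip, x, y) tile key and the helper names are hypothetical, eviction is left out, and the 64 KiB figure is the standard D3D12 tiled-resource tile size. It also does not count the memory used by the feedback maps themselves, which is part of why this mode saves less VRAM than Inference on Sample.

```python
from typing import Set, Tuple

# Hypothetical tile key for this sketch: (mip level, tile x, tile y).
Tile = Tuple[int, int, int]

# D3D12 tiled resources are managed in 64 KiB tiles.
TILE_BYTES = 64 * 1024

def update_residency(resident: Set[Tile], requested: Set[Tile]):
    """Decode only the requested tiles that are not resident yet.

    Eviction is omitted for brevity; a real streamer would also drop tiles
    that have not been requested for a while.
    """
    to_decode = requested - resident
    return to_decode, resident | to_decode

def resident_bytes(resident: Set[Tile]) -> int:
    return len(resident) * TILE_BYTES

# Frame 1: the camera sees a handful of mip-0 and mip-1 tiles.
resident: Set[Tile] = set()
frame1 = {(0, 3, 4), (0, 3, 5), (0, 4, 4), (1, 1, 2)}
decoded, resident = update_residency(resident, frame1)
print(len(decoded), resident_bytes(resident))

# Frame 2: the view shifts slightly, so only the newly visible tile is decoded.
frame2 = {(0, 4, 4), (0, 4, 5), (1, 1, 2)}
decoded, resident = update_residency(resident, frame2)
print(len(decoded), resident_bytes(resident))
```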

Thanks to the Cooperative Vector extensions for Vulkan and Direct3D 12, pixel shaders can use the AI acceleration units in modern GPUs (Nvidia Tensor Cores, AMD AI Accelerators, or Intel XMX engines). NTC takes advantage of this hardware acceleration for a significant improvement in inference throughput.

Why Neural Texture Compression?

Neural Texture Compression achieves higher compression ratios than other formats like BCn. It also supports materials with high channel counts – it operates on up to 16 channels at once. Block compression operates on just 1-4 channel images.
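A quick bit of arithmetic shows why the channel limit matters for multi-map materials. The Python sketch assumes a hypothetical 11-channel material and ideal packing; real engines usually pick a BCn format per channel group (for example a two-channel format for normals), so the real BCn texture count is often higher than this lower bound.

```python
import math

BCN_MAX_CHANNELS = 4   # BCn formats store 1 to 4 channels per texture
NTC_MAX_CHANNELS = 16  # NTC operates on up to 16 channels at once

def textures_needed(material_channels: int, max_channels: int) -> int:
    """Minimum number of textures needed to hold all channels of a material."""
    return math.ceil(material_channels / max_channels)

# Hypothetical material: albedo RGB (3) + normal XY (2)
# + roughness/metalness/AO (3) + emissive RGB (3) = 11 channels.
channels = 3 + 2 + 3 + 3
print(textures_needed(channels, BCN_MAX_CHANNELS),   # BCn: at least 3 textures
      textures_needed(channels, NTC_MAX_CHANNELS))   # NTC: 1 texture set
```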

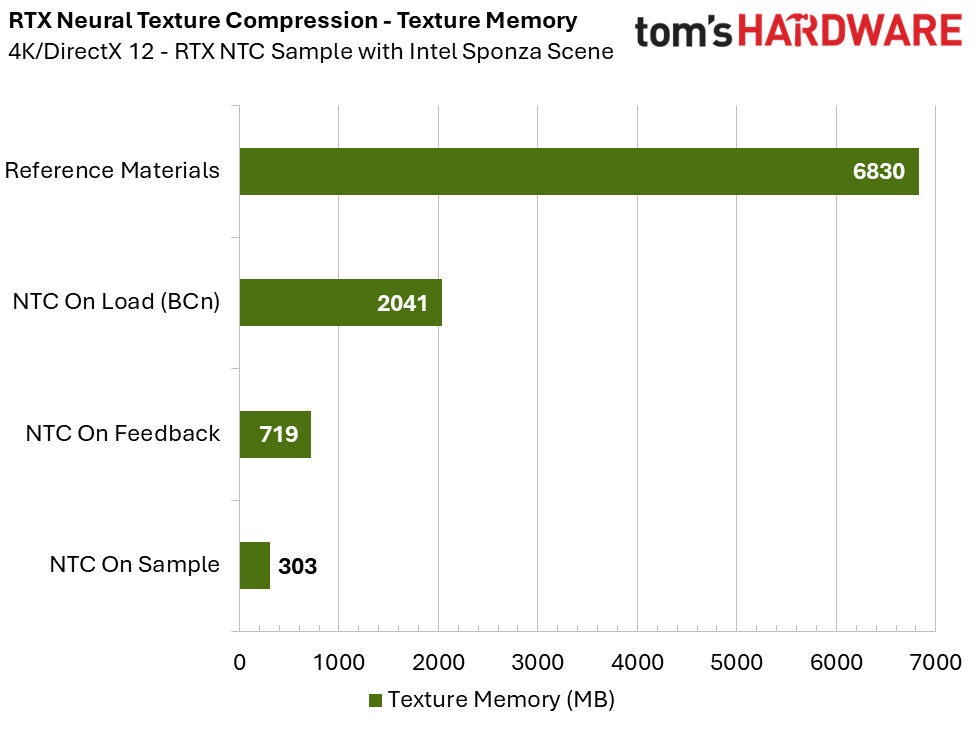

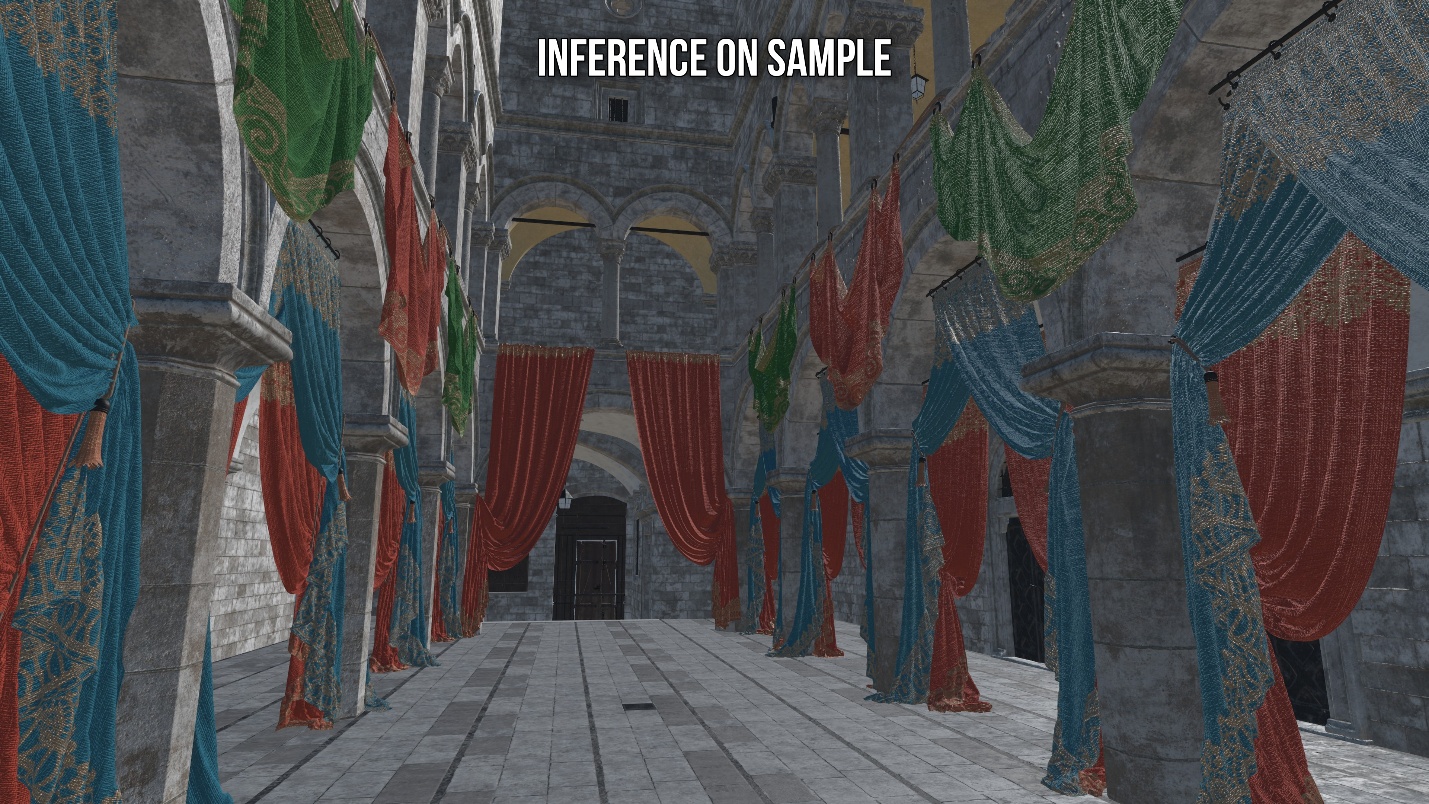

The data below was captured while running the RTX Neural Texture Compression sample on GitHub with the Intel Sponza base scene and Colorful Curtains package (see the test system details further below for the full setup).

Compared to NTC Inference on Load, which transcodes textures into a block-compressed format for working memory, you can see that Inference on Sample offers an enormous 85% reduction in required texture memory.
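To put an 85% reduction in perspective, it helps to know how large BCn textures are to begin with. BCn stores 4x4 texel blocks at 8 bytes per block (BC1/BC4) or 16 bytes per block (BC2, BC3, BC5, BC6H, BC7), and a full mip chain adds roughly one third on top of the base level. The Python sketch below computes that footprint and then simply applies the 85% figure measured here to a hypothetical material made of four 4K BC7 maps; actual savings depend on the content and NTC settings.

```python
def bcn_mip_chain_bytes(width: int, height: int, block_bytes: int) -> int:
    """Bytes for a full mip chain of a block-compressed (4x4 block) texture."""
    total = 0
    w, h = width, height
    while True:
        blocks = ((w + 3) // 4) * ((h + 3) // 4)  # partial blocks still cost a full block
        total += blocks * block_bytes
        if w == 1 and h == 1:
            break
        w, h = max(1, w // 2), max(1, h // 2)
    return total

def remaining_after_reduction(bytes_before: float, reduction: float) -> float:
    """Memory left after a fractional reduction (0.85 means 85% smaller)."""
    return bytes_before * (1.0 - reduction)

# Hypothetical material: four 4096x4096 BC7 maps (16 bytes per block).
material = 4 * bcn_mip_chain_bytes(4096, 4096, 16)
print(material / 2**20, remaining_after_reduction(material, 0.85) / 2**20)  # MiB
```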

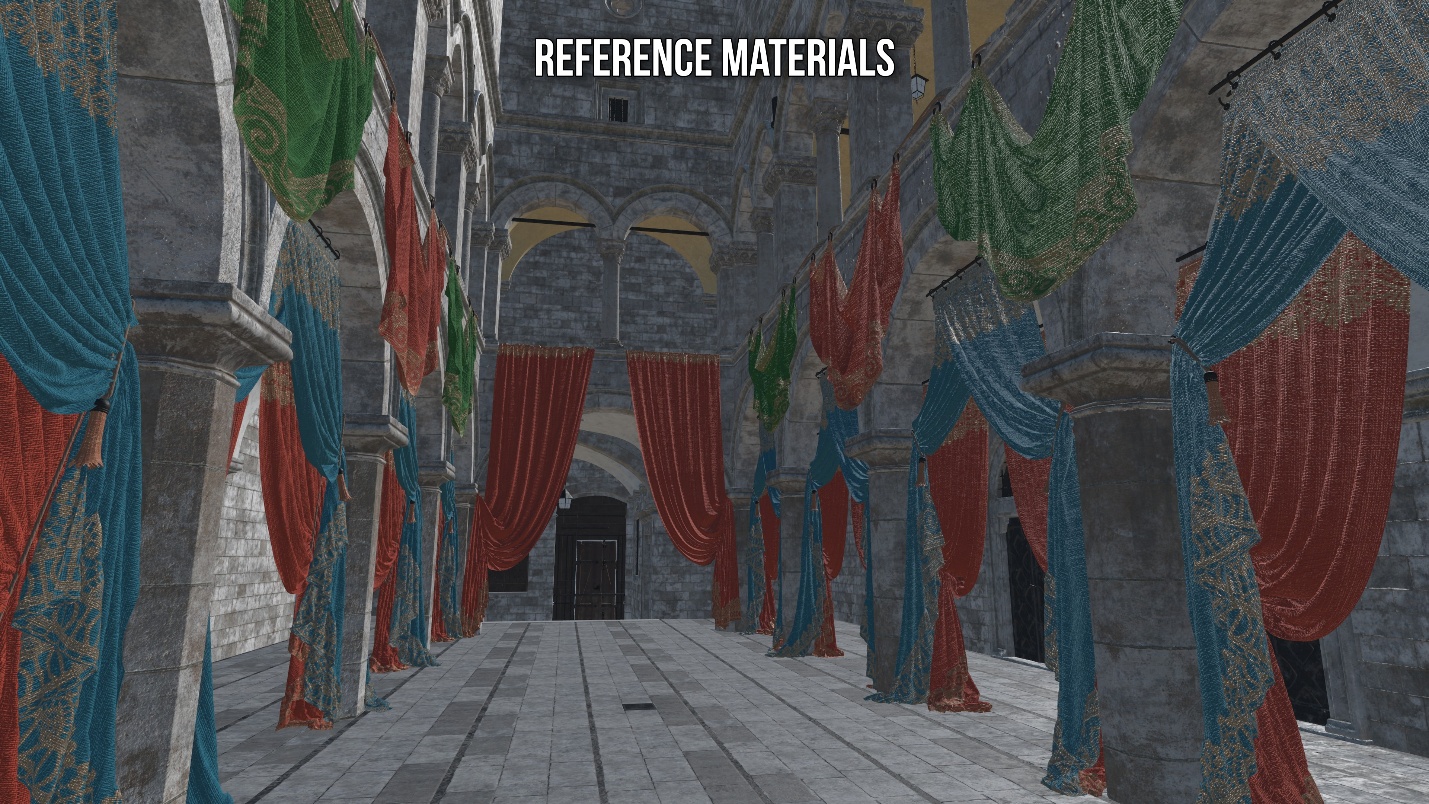

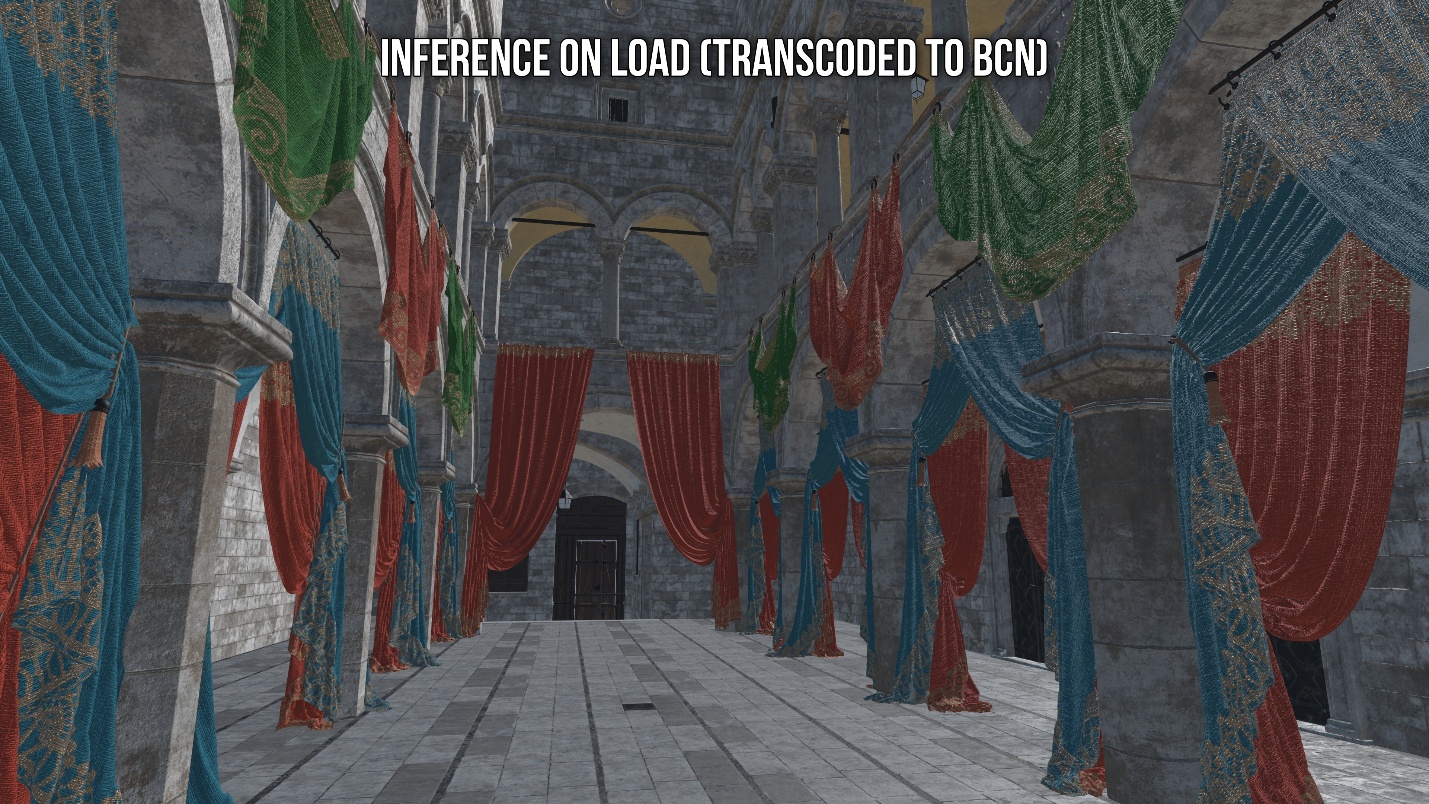

Not only does Inference on Sample provide a massive reduction in VRAM, but as the comparison above shows, it also produces an image that is closer to the reference than the BCn-transcoded textures. The textures in Inference on Sample mode are almost a perfect match for the reference textures.

However, it is not without issues. The above images were taken with DLSS enabled. Stochastic Texture Filtering (STF) is used to get filtered textures in NTC by introducing randomness. As a result, enabling STF without using any sort of anti-aliasing can produce an image with a lot of noise. This noise is entirely cleaned up by DLSS. TAA mostly cleans up the image, but not entirely. Inference on Sample mode requires the use of STF, and so it cannot be disabled. As such, this mode requires the use of anti-aliasing, preferably DLSS, in order to look its best.
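A simplified model helps explain why temporal techniques hide STF noise. The Python sketch below treats one pixel whose stochastic sample returns a bright texel with a fixed probability each frame, and blends those samples into a history value with an exponential moving average, the basic accumulation step behind temporal anti-aliasing. It is an assumption-laden toy: real TAA also reprojects and clamps history, and DLSS is far more sophisticated, but it shows how accumulating over frames shrinks the noise while leaving some residual flicker.

```python
import random
import statistics

def stf_sample(rng: random.Random, p_bright: float) -> float:
    """One stochastic sample: the bright texel (1.0) with probability p_bright, else 0.0."""
    return 1.0 if rng.random() < p_bright else 0.0

def accumulate(frames: int, alpha: float, p_bright: float, seed: int = 1):
    """TAA-style exponential history: history = lerp(history, new_sample, alpha)."""
    rng = random.Random(seed)
    history = stf_sample(rng, p_bright)
    raw, resolved = [history], [history]
    for _ in range(frames - 1):
        s = stf_sample(rng, p_bright)
        history += alpha * (s - history)
        raw.append(s)
        resolved.append(history)
    return raw, resolved

raw, resolved = accumulate(frames=2000, alpha=0.1, p_bright=0.68)
warm = resolved[100:]  # skip the frames where the history is still converging
print(statistics.pstdev(raw), statistics.pstdev(warm), statistics.fmean(warm))
```

With alpha = 0.1 the per-frame noise drops to roughly a quarter of the raw STF noise but does not vanish, which loosely mirrors the observation that TAA cleans up most, but not all, of the noise.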

Last year, during a presentation on RTX Neural Texture Compression at Vulkanised 2025, Nvidia outlined that, in addition to reducing texture memory, NTC can also be used to provide drastically superior texture quality even when under the same memory constraints.

In the above scene, the same amount of VRAM is used for both BCn and NTC, yet NTC preserves significantly more texture detail and visual fidelity. We’ll jump to the performance benchmarks now, but be sure to read further below for additional details about NTC from an Nvidia engineer.

How Does it Perform?

The benefits of the technology are clear, but how does it perform? We will be looking at several GPUs and how they handle NTC in the RTX Neural Texture Compression sample on GitHub.

Inference on Load transcodes the NTC textures to BCn during game or map load, so it has zero performance overhead compared to block compression. Inference on Sample, on the other hand, incurs a performance cost on all GPUs because it performs neural decoding on the fly during sampling. For it to be practical, that cost should ideally be minimal.

The sample will be tested using the Intel Sponza base scene with the Colorful Curtains package in order to simulate a more demanding gaming workload than the simple default model that comes with the sample. However, it is important to note that while the Intel Sponza base scene is more realistic than the simple default model, the sample still has only a basic forward pass and TAA/DLSS.

A game will have many more render passes than this, and most of these passes will not be affected by NTC. As such, the relative frametime cost in an actual game may be lower than what we see in this sample. This is also why we report performance as frametime rather than frame rate: since most other render passes in a game would be unaffected by NTC, the number of milliseconds NTC adds in this sample gives a better idea of its absolute frametime cost in an actual game.
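The arithmetic behind this reasoning is simple. The Python sketch assumes the NTC cost is a fixed number of milliseconds simply added on top of the rest of the frame (in practice GPU work can overlap), uses 0.6 ms as a value in the range measured on the RTX 5070 and RTX 5060 in this article, and compares two hypothetical baselines: a light sample scene at 250 fps and a heavier game at 60 fps.

```python
def fps_to_ms(fps: float) -> float:
    """Convert frames per second to milliseconds per frame."""
    return 1000.0 / fps

def relative_cost(base_ms: float, added_ms: float) -> float:
    """Fractional frametime increase when a fixed cost is added to a frame."""
    return added_ms / base_ms

ADDED_MS = 0.6  # assumed fixed cost of Inference on Sample

# Hypothetical baselines: a light sample scene vs. a heavier game frame.
for fps in (250.0, 60.0):
    base = fps_to_ms(fps)
    new_fps = 1000.0 / (base + ADDED_MS)
    print(f"{fps:.0f} fps -> {new_fps:.1f} fps "
          f"({relative_cost(base, ADDED_MS):.1%} longer frame)")
```

The same 0.6 ms makes a 4 ms frame 15% longer but a 16.7 ms frame only about 3.6% longer, which is why the absolute millisecond cost transfers better between the sample and a real game than an FPS percentage does.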

The focus of the benchmarks will be on the resolution that is most appropriate for each GPU.

The Cooperative Vector implementation in DirectX 12 requires Microsoft DirectX 12 Agility SDK 1.717.x-preview and Nvidia Developer Driver 590.26 for Shader Model 6.9 functionality. Therefore, we use this driver for all scenarios tested throughout the article.

Inference on Feedback is only available in DirectX 12 due to the lack of a Vulkan equivalent to DirectX 12 Sampler Feedback.

Test System

- AMD Ryzen 7 9800X3D

- 64GB (2x32GB) G.Skill Flare X5 DDR5 @ 6200 MHz CL30

- Crucial T700 Gen5 SSD

- Asus ROG STRIX B850-F Gaming WiFi

- Corsair Nautilus 360 RS AIO Cooler

- HAGS enabled

- Windows 11 25H2 (Build 26200.8117)

- Nvidia Developer Driver 590.26

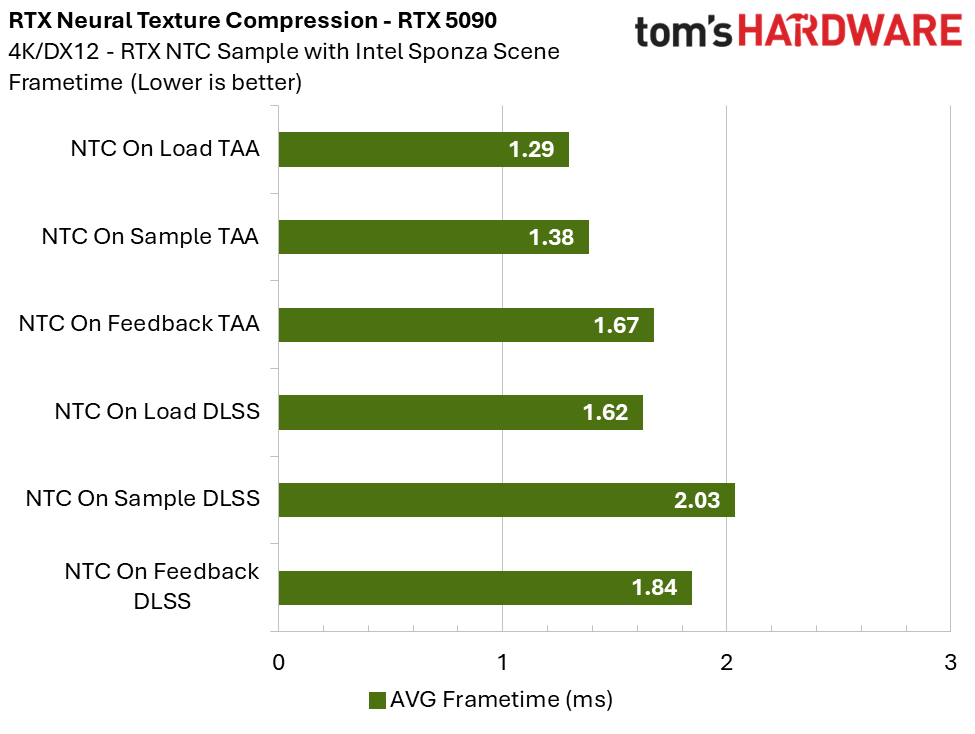

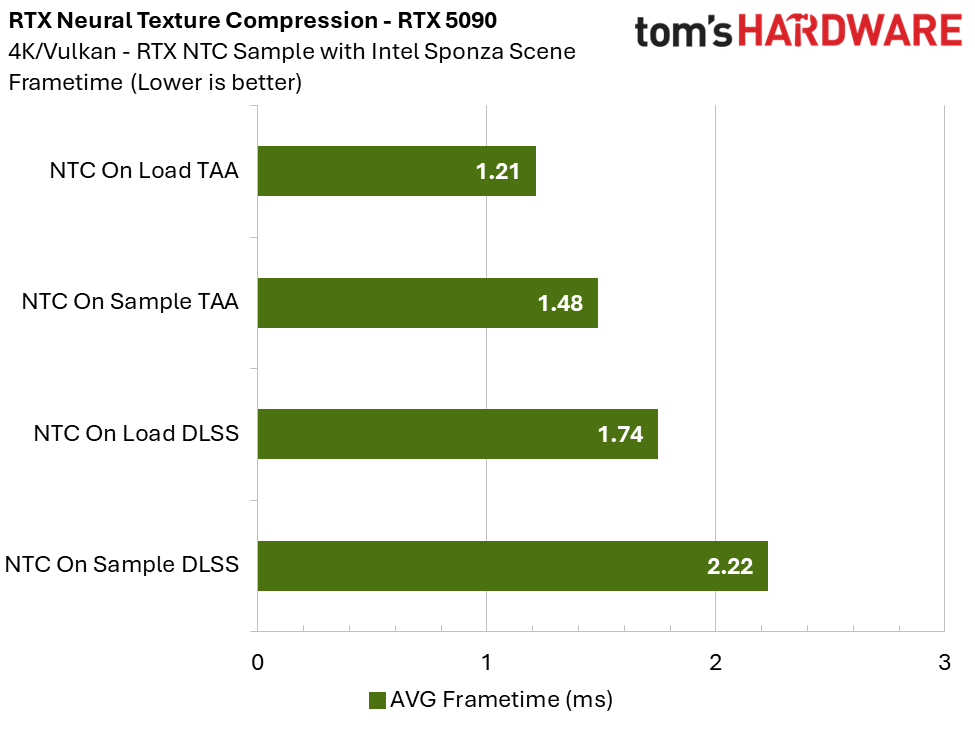

The RTX 5090 is up first, and as you can see, even at 4K the frametime cost of Inference on Sample with TAA is rather low compared with the BCn-transcoded textures in Inference on Load. Enabling DLSS adds further cost, as it puts more stress on the Tensor Cores. However, in a real game with more render passes and a lot more happening on screen, the lower internal rendering resolution that DLSS enables should still result in a net performance gain.

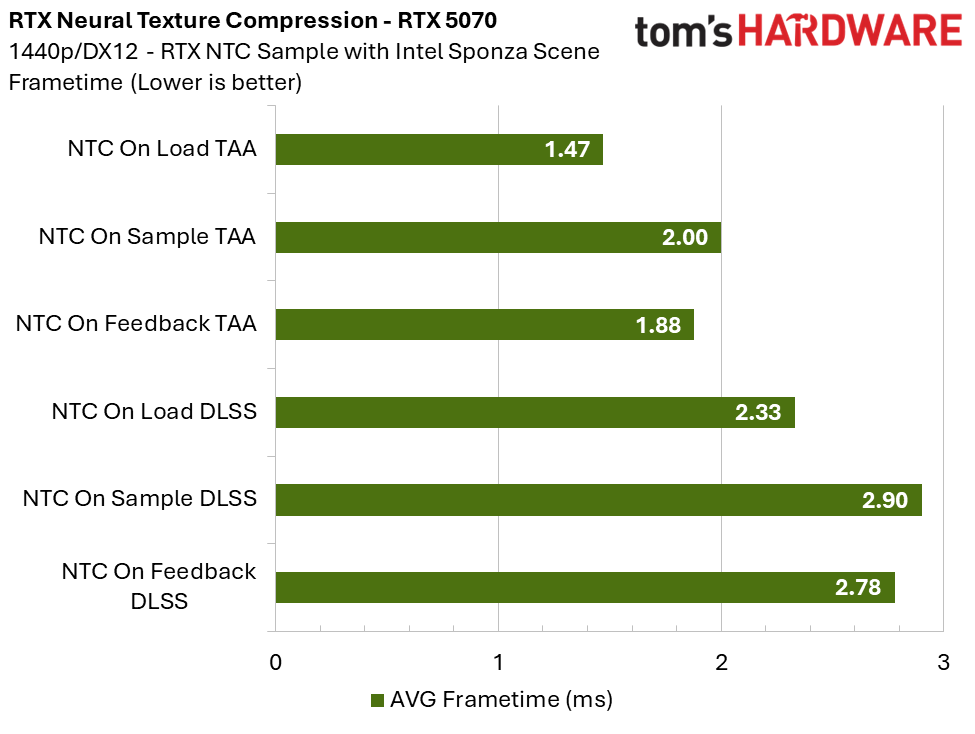

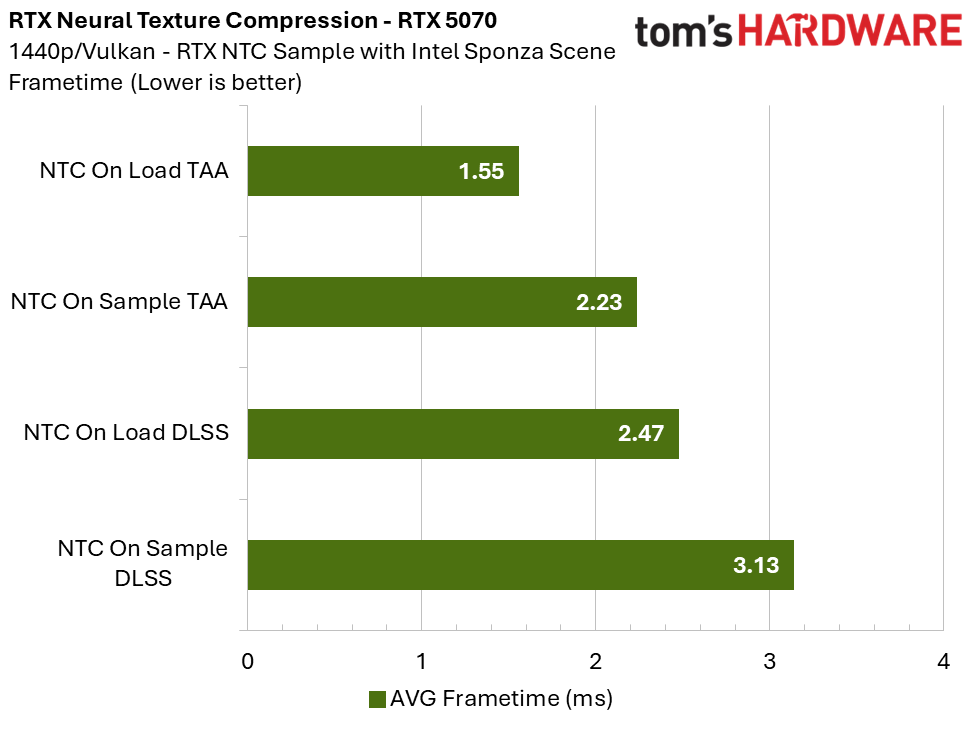

On the RTX 5070 at 1440p, the cost of the Inference on Sample mode compared to the BCn-transcoded textures is roughly 0.50-0.70 ms, depending on the scenario. We are within 1 ms. Keep in mind that real games involve many more render passes – not all of which are affected by NTC – and typically have significantly higher overall frametimes than this sample. As a result, the relative performance cost of NTC is likely to be much more acceptable in practice.

At 4K, the cost is approximately 1.20 ms.

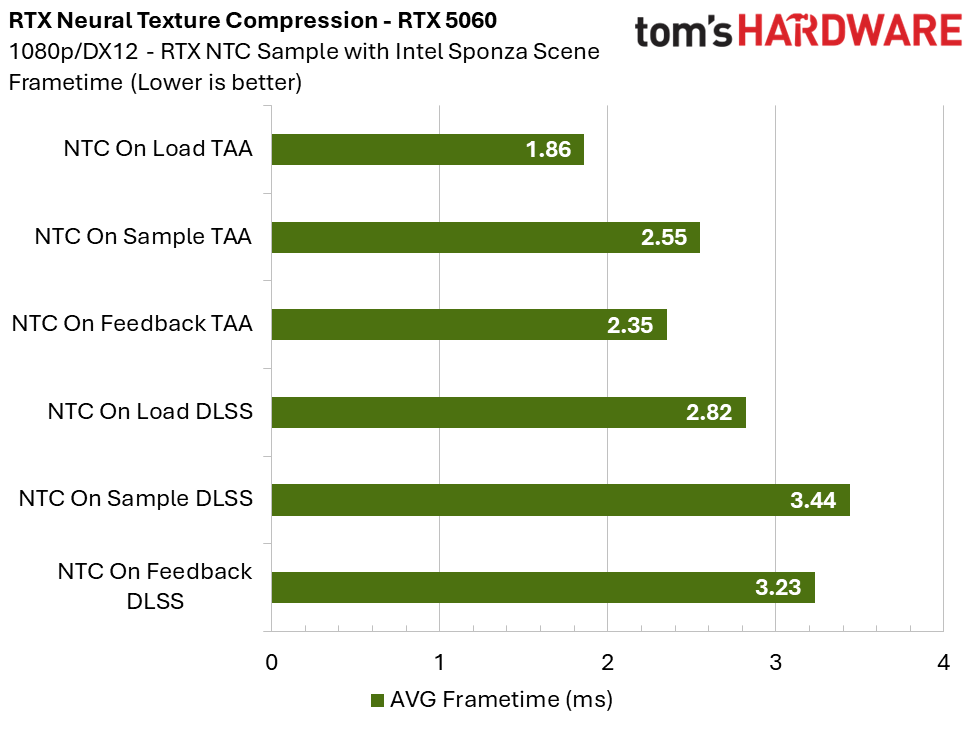

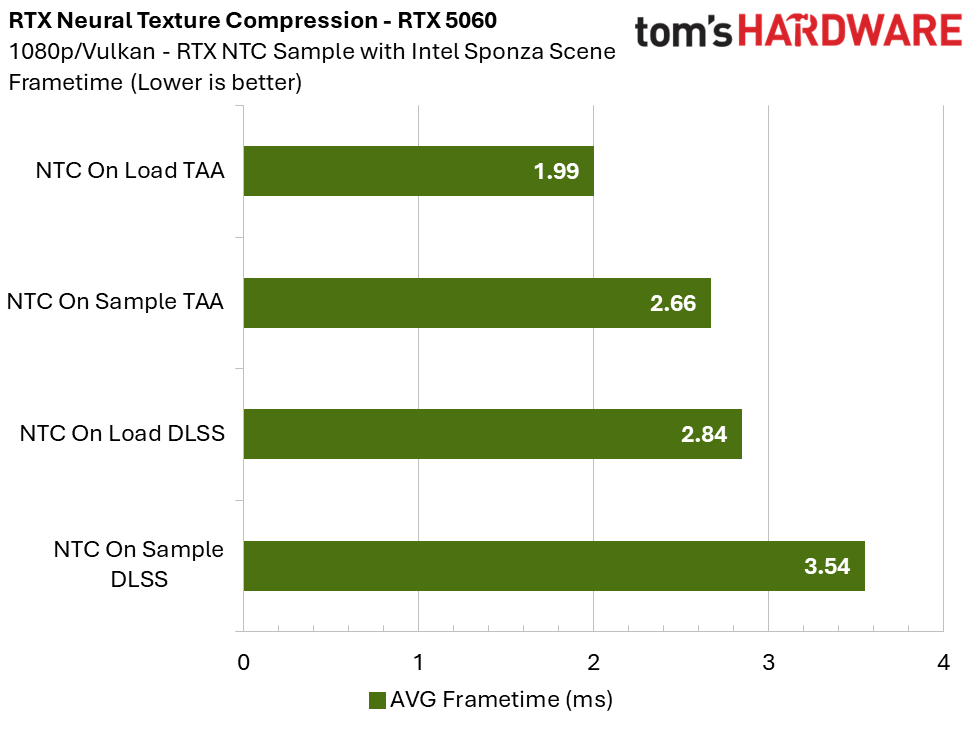

On the RTX 5060 at 1080p, the performance cost of Inference on Sample is 0.60-0.70 ms, depending on the scenario. At a resolution that is appropriate for this GPU, we are again within 1 ms.

The 5060 does struggle at higher resolutions, though. At 1440p, the cost is over 1 ms, and at 4K, the cost approaches 2 ms, although this is to be expected for this level of GPU.

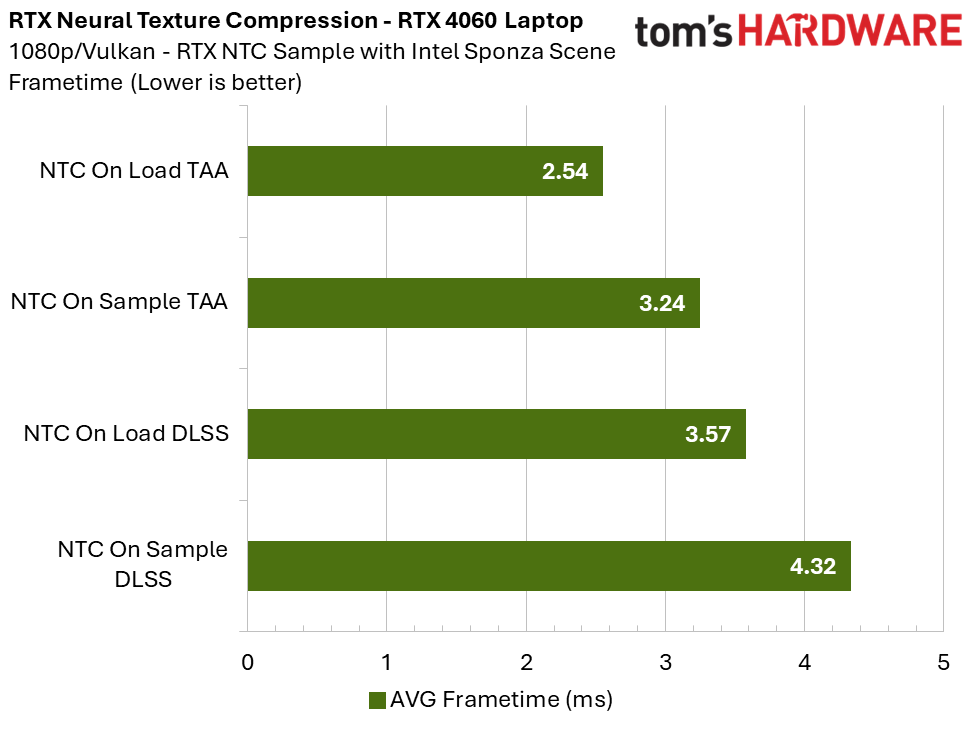

Now let’s take a look at a lower-end system: a laptop with an RTX 4060 Mobile GPU.

Mobile Test System

- RTX 4060 Laptop GPU

- Intel Core i7-13620H

- Gen4 SSD

- 16GB DDR5

- HAGS enabled

- Windows 11 25H2 (Build 26200.8117)

- Nvidia Developer Driver 590.26

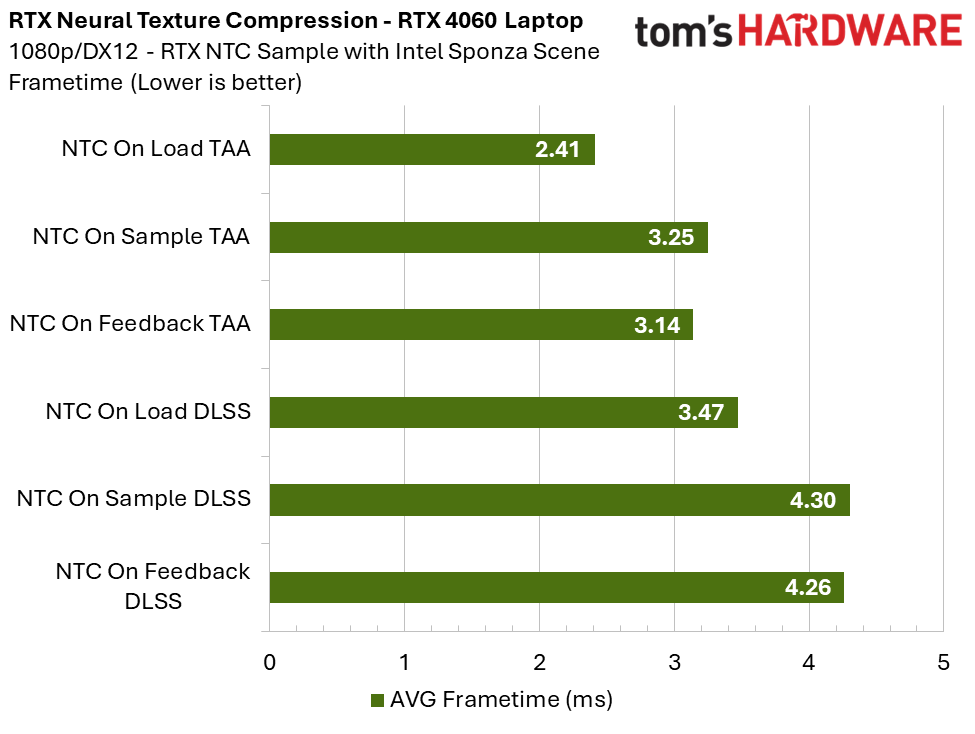

The performance cost of the Inference on Sample mode at 1080p on the 4060 Laptop GPU is roughly 0.70-0.85 ms, depending on the scenario.

The cost for the 4060 is getting close to 1 ms. There could still be scenarios where the 4060 with its 8 GB frame buffer could benefit from Inference on Sample. If VRAM is the main limitation, then it may be worth using this mode. As Alexey Panteleev mentions below, if a game forces you to lower the texture quality setting because it would otherwise not fit into VRAM, but the game runs more than fast enough when you do that, then Inference on Sample could be a net benefit.

Insight From a Neural Texture Compression Developer at Nvidia

When I uploaded a couple of NTC videos on the Compusemble YouTube channel in October 2025, Alexey Panteleev – Distinguished DevTech Engineer at Nvidia and NTC developer – generously joined the comments section. He shared additional insight and answered viewer questions.

What GPUs are recommended for each mode?

Alexey Panteleev: Inference on Sample is only viable on the fastest GPUs, and that’s why we also provide the Inference On Load mode that transcodes to BCn and only provides disk size or download size reduction, not VRAM benefits.

Whether a GPU will be fast enough for inference on sample depends mostly on the specific implementation in a game. Like, whether they use material textures in any pass besides the G-buffer, how complex their material model is and how large the shaders are, etc. And we’re working on improving the inference efficiency.

How might NTC be implemented in games to ensure a good experience for all?

Alexey Panteleev: Our thinking is that games could ship with NTC textures and offer a mode selection, On Load/Feedback vs. On Sample, and users could choose which one to use based on the game performance on their machine. I think the rule of thumb should be – if you see a game that forces you to lower the texture quality setting because otherwise it wouldn’t fit into VRAM, but when you do that, it runs more than fast enough, then it should be a good candidate for NTC On Sample.
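The rule of thumb above can be written down as a small decision function. This Python sketch is a hypothetical encoding, not anything Nvidia ships: the field names and the example numbers (a laptop with about 6 GB of VRAM free for textures) are assumptions, "more than fast enough" is approximated as "still meets the frametime target after adding the On Sample cost", and it ignores that On Feedback also reduces VRAM use in DirectX 12.

```python
from dataclasses import dataclass

@dataclass
class SystemProfile:
    vram_budget_mb: float            # VRAM available for textures
    bcn_texture_set_mb: float        # working set at high quality with On Load (BCn)
    ntc_texture_set_mb: float        # working set at high quality with On Sample
    frametime_low_quality_ms: float  # measured with texture quality lowered to fit
    target_frametime_ms: float       # e.g. 16.7 ms for 60 fps
    on_sample_cost_ms: float         # measured extra cost of On Sample on this GPU

def pick_ntc_mode(p: SystemProfile) -> str:
    """Hypothetical version of the 'forced to lower texture quality' rule of thumb."""
    if p.bcn_texture_set_mb <= p.vram_budget_mb:
        return "On Load / Feedback"  # no VRAM pressure, so skip the runtime cost
    fits_with_ntc = p.ntc_texture_set_mb <= p.vram_budget_mb
    has_headroom = (p.frametime_low_quality_ms + p.on_sample_cost_ms
                    <= p.target_frametime_ms)
    if fits_with_ntc and has_headroom:
        return "On Sample"
    return "On Load / Feedback (lower texture quality)"

laptop = SystemProfile(vram_budget_mb=6000, bcn_texture_set_mb=7500,
                       ntc_texture_set_mb=1500, frametime_low_quality_ms=11.0,
                       target_frametime_ms=16.7, on_sample_cost_ms=0.8)
print(pick_ntc_mode(laptop))
```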

Another important thing – games don’t have to use NTC on all of their textures, it can be a per-texture decision. For example, if something gets an unacceptable quality loss, you could keep it as a non-NTC texture. Or if a texture is used separately from other textures in a material, such as a displacement map, it should probably be kept as a standalone non-NTC texture.
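A per-texture decision like this could sit in an asset pipeline. The Python sketch below is a hypothetical illustration: it measures NTC quality with PSNR (a common image-quality metric, standing in for whatever check a studio would actually use), applies an assumed 40 dB threshold, and keeps textures that are sampled on their own as standalone. The reason for that last rule is presumably that decoding any channel of an NTC material means running the network for the whole texture set.

```python
import math
from dataclasses import dataclass
from typing import Sequence

def psnr(reference: Sequence[float], decoded: Sequence[float], peak: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized images."""
    mse = sum((a - b) ** 2 for a, b in zip(reference, decoded)) / len(reference)
    return math.inf if mse == 0 else 10.0 * math.log10(peak * peak / mse)

@dataclass
class TextureInfo:
    name: str
    sampled_on_its_own: bool  # e.g. a displacement map read in a separate pass
    ntc_psnr_db: float        # PSNR of the NTC round trip vs. the source texture

PSNR_THRESHOLD_DB = 40.0  # hypothetical acceptance threshold

def choose_storage(tex: TextureInfo) -> str:
    """Keep a texture standalone if it is used alone or NTC quality is too low."""
    if tex.sampled_on_its_own or tex.ntc_psnr_db < PSNR_THRESHOLD_DB:
        return "standalone (non-NTC)"
    return "NTC"

textures = [
    TextureInfo("albedo", sampled_on_its_own=False, ntc_psnr_db=42.0),
    TextureInfo("normal", sampled_on_its_own=False, ntc_psnr_db=35.5),
    TextureInfo("displacement", sampled_on_its_own=True, ntc_psnr_db=45.0),
]
for t in textures:
    print(t.name, choose_storage(t))
```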

How might NTC perform in an actual game compared to how it performs in the sample?

Alexey Panteleev: On Sample mode is noticeably slower than On Load, which has zero cost at render time. However, note that a real game would have many more render passes than just the basic forward pass and TAA/DLSS that we have here, and most of them wouldn’t be affected, making the overall frame time difference not that high. The relative performance difference between On Load and On Sample within the same GPU family should be similar. If a GPU runs out of VRAM, On Load wouldn’t help at all, because it doesn’t reduce the working set size, and uploads over PCIe only happen when new textures or tiles are streamed in.

On the effect of Stochastic Texture Filtering (STF) on the image

When the NTC sample was first released last year, some people noticed that the image contained a lot of noise when anti-aliasing was turned off. DLSS cleaned this noise up entirely, while TAA cleaned up most of it but not all. The noise comes from STF: with STF disabled, we no longer saw any noise in the image with AA off. However, STF is required for Inference on Sample.

Alexey Panteleev: Also note that STF (Stochastic Texture Filtering) plays a major role in how things with detailed specular reflections look, like the curtains. Here, you can toggle STF on or off in the Reference and On Load modes, but not On Sample – that one requires STF and it’s always on. STF is on by default in all modes, to make the comparison more direct.

A Peek into the Future of Rendering

The sample tested here offers a fascinating glimpse into the future of graphics rendering. Neural Texture Compression (NTC) can offer extremely large compression ratios without sacrificing image quality – and in fact, appears to offer better image quality than block-compressed formats in some scenarios.

It is very impressive that the Inference on Sample mode produced slightly better image quality than the BCn transcoded textures in the Intel Sponza base scene, while at the same time reducing texture memory by 85%. The Inference on Sample mode was almost a perfect match for the reference (uncompressed) materials.

That said, some caveats remain. Stochastic Texture Filtering (STF) introduces visible noise when anti-aliasing is completely disabled, and some residual noise can still appear even when using Temporal Anti-Aliasing (TAA). NTC currently requires DLSS to look its best when using STF, which is mandatory for Inference on Sample.

The compatibility of this technology across a wide range of GPUs also stood out. Developers can compress textures using NTC and still offer an Inference on Load mode, which transcodes the NTC textures to BCn during game or map load. While this will not shrink VRAM usage, it has no runtime performance cost and greatly reduces the footprint of games on disk. The technology is also supported on AMD and Intel GPUs.

Neural Texture Compression is poised to play a crucial role in the future of real-time graphics, and it will be exciting to see how it evolves and matures over time.
