Following Nvidia’s GTC 2026 keynote, where CEO Jensen Huang laid out the company’s Vera Rubin architecture and the Groq 3 LPU acquisition, Nvidia VP of Hyperscale and HPC Ian Buck sat down with press for a Q&A session in San Jose.

Buck addressed CPX’s delay, the LPU-GPU decode architecture, and the Vera CPU’s role in the AI data center, and he took questions on the Intel NVLink Fusion partnership. This is a full transcript of a session attended by Tom’s Hardware at GTC 2026, so it can occasionally be unclear; we have marked such moments within the copy. Before diving into the transcript, it’s worth refamiliarizing yourself with Jensen Huang’s keynote from last week, which we’ve linked below.


CPX delay and LPU decode architecture

Ian Buck: As part of bringing the LPU to market this year with Vera Rubin… we’ve pulled CPX. It’s still a good idea, but in order to dedicate our focus on… optimizing the decode with LPU this year. So we’ll be thinking about CPX more in the next generation [and] we’re going to execute on decode with LPU now, this year.


A couple other things I wanted to touch on. I also get a lot of questions about how we’re doing this. How does the LPU work? How does it work with the GPU? Jensen went over it briefly. This might be more technical, but it’s an important point.

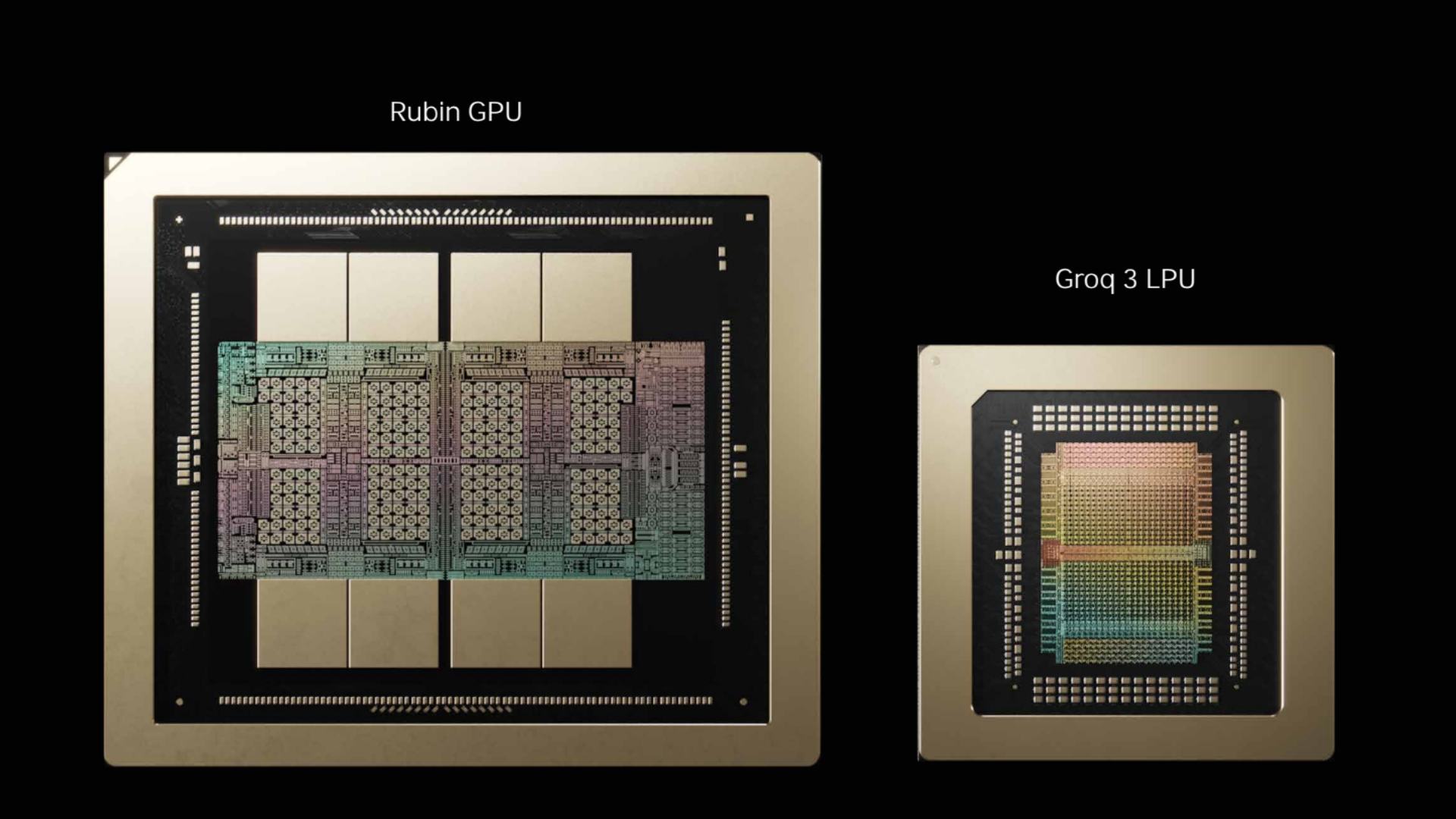

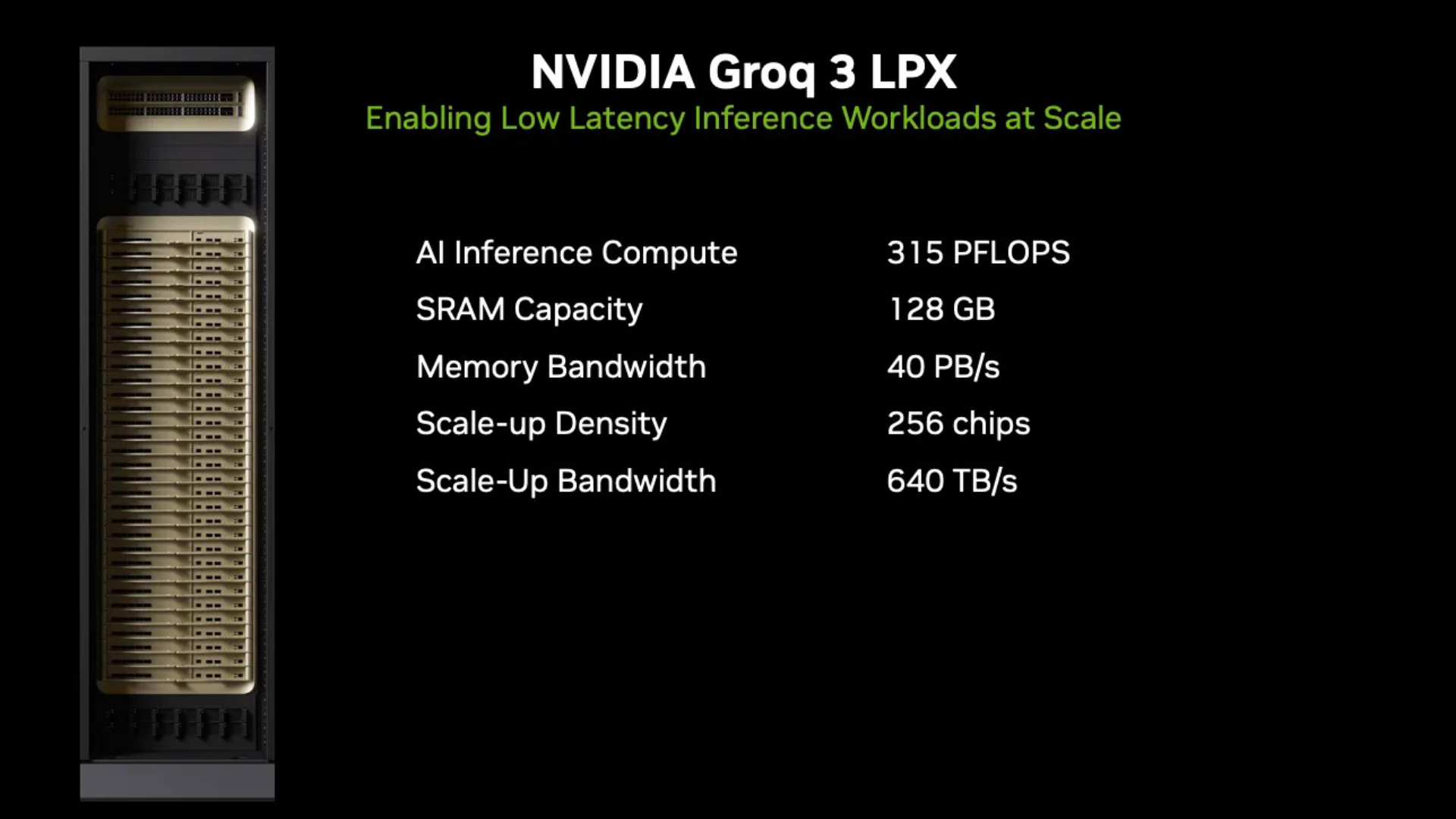

The way we do the decode is with a Groq 3 LPU LPX rack. Here we have 256 LPU chips combined with a Vera Rubin NVL72. We’re going to do the decode using Dynamo. We’ve combined the two teams, so Groq’s software team has joined our Dynamo team.

We now do not only disaggregation of pre-fill and decode onto separate GPUs, but the decode itself is actually split between the LPU and GPU. That’s what makes the extremely fast token generation economical. We can focus and run the computations that benefit from the fast SRAM of the LPU over here in one layer, and literally the next layer, we can send the intermediate activation state over to the GPUs to do all the attention math, all the softmax, all the routing, all the KV calculations, so that only the LPUs need to have copies of the weights. All the per-query state, all the KV [cache] state, which can get quite large, can operate and stay in the HBMs.
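To make the split concrete, here is a toy placement plan for one decode step. It is a hypothetical sketch, not Dynamo’s scheduler or API: the layer layout (alternating attention and MoE layers), the device labels, and the placement rule are our simplification of Buck’s description, in which weight-heavy expert layers run out of LPU SRAM while attention, with its per-query KV cache, runs on the GPUs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    index: int
    kind: str  # "attention" or "moe" in this toy model


def build_layers(num_blocks: int) -> list[Layer]:
    """Each toy transformer block is one attention layer followed by one MoE layer."""
    layers: list[Layer] = []
    for b in range(num_blocks):
        layers.append(Layer(2 * b, "attention"))
        layers.append(Layer(2 * b + 1, "moe"))
    return layers


def place(layer: Layer) -> str:
    """Hypothetical placement rule for one decode step."""
    # Attention reads the per-query KV cache, which stays in GPU HBM.
    # Expert (MoE) layers stream their weights from LPU SRAM.
    return "gpu" if layer.kind == "attention" else "lpu"


def handoffs_per_token(layers: list[Layer]) -> int:
    """Count device switches; each one ships only the token's activations."""
    devices = [place(layer) for layer in layers]
    return sum(1 for a, b in zip(devices, devices[1:]) if a != b)


layers = build_layers(num_blocks=4)
print([place(layer) for layer in layers])
print(handoffs_per_token(layers), "activation handoffs per generated token")
```

In this model, every device switch moves only one token’s intermediate activations, which are small next to the expert weights and KV cache that never leave their home device.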

Of course, both processors can do both things. The LPUs can do the attention math. Obviously the GPUs can do the […] as well. So you can optimize for resiliency.

Dynamo was launched a year ago. It was nicknamed the operating system of [the] AI factory. I will say it’s been a roaring success. If any of you got to go to the Dynamo meetups, it was where all of the different users of Dynamo, developers, and customers were all talking. I think we get about 100 GitHub submissions a day now, and about a third of them are coming from external [sources].

Vera CPU positioning

Ian Buck: Definitely happy to talk about Vera. I’ve actually brought with me the Vera board. This is the Vera module. It’s a reference module that we give to system partners, which they can build. It has two Vera CPUs and LPDDR5 memory. So this is a dual-socket server that will operate and run all of the tooling: PyTorch, compiling, SQL queries, as well as for HPC partners.

It is the world’s best agentic CPU. It has 88 cores designed so that you can put everything on. Turn on everything. Run them all at full speed. Compile on every core. Browse or render on every core. Python on every core. SQL on every core.

The agentic world needs a CPU that has the world’s best single-threaded performance under load, has the world’s best memory bandwidth under load, and has the best energy efficiency under load. And it turns out, while it started with a CPU that was an excellent CPU to be married with our GPUs, it makes perfect sense that all the things we needed to do to operate and run AI with our GPUs also makes a great CPU as well.

Journalist 1: Is it safe to say [Rubin] CPX is not going to come out in 2026?

Ian Buck: I’m never going to say no to how fast we can innovate. But I think we can do a lot better [with LPU decode first].

Journalist 2: Jensen was asked about the target market, target use case. And he said he was very careful about how he answered that. He didn’t want to position it as a direct drop-in replacement for x86.

Ian Buck: We only are going to build one Vera SKU […] other people are going to build x86 SKUs […] the world is not going to be served by one SKU of CPU. And that’s not our intention. The intention is that we’d like to solve a workload problem. It’s not designed to be a dollar-per-vCPU chip. The amount of technology and, frankly, just the cost to build something that solves that critical workload makes it not for that market; it’s a bad gaming chip.

But it is inspired by single-threaded performance. You may not need 88 cores, but it’s actually a unique workload because in agentic AI, it’s in the critical path for both training and running these models. When you’re training a model to code, for example, you start from a model that needs to learn how to code better. And as you’re training on Vera Rubin, you’re halfway through training, and you say: go write a program that computes the Fibonacci series, or solves a New York Times crossword puzzle.

The AI model will then try to write that program. It then needs to score how well it did. We’re not going to run that Python on the GPU. It’s a CPU job. The GPU tells the CPU to go run it. So it opens up a sandbox, boots a Linux instance, starts the Python interpreter, executes, compiles, and runs that code. And it’s got to score it — how well did it do? Lines of code, accuracy, did it crash? — very quickly. So that all those results from the training run can get back to the GPU in order to do the next iteration of training.
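The CPU-side grading step Buck describes can be sketched in a few lines. This is a hypothetical illustration: the `score_program` helper and its reward rule (full credit for correct output, minus 0.01 per line of code, zero for crashes or timeouts) are our assumptions, and a plain subprocess is not a real sandbox.

```python
import subprocess
import sys


def score_program(source: str, expected_stdout: str, timeout_s: float = 5.0) -> dict:
    """Run model-generated Python in a separate interpreter and grade it.

    Caution: a plain subprocess is NOT a sandbox. A real RL pipeline would run
    this inside an isolated container or VM, as Buck describes.
    """
    lines = sum(1 for ln in source.splitlines() if ln.strip())
    try:
        proc = subprocess.run(
            [sys.executable, "-c", source],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # Ran too long: the run is cut off and earns no reward.
        return {"crashed": False, "timed_out": True, "correct": False,
                "lines": lines, "reward": 0.0}
    crashed = proc.returncode != 0
    correct = not crashed and proc.stdout.strip() == expected_stdout.strip()
    # Hypothetical reward: 1.0 for a correct answer minus 0.01 per non-blank
    # line, floored at 0; crashes and wrong answers score 0.
    reward = max(0.0, 1.0 - 0.01 * lines) if correct else 0.0
    return {"crashed": crashed, "timed_out": False, "correct": correct,
            "lines": lines, "reward": reward}


fib = (
    "a, b = 0, 1\n"
    "for _ in range(10):\n"
    "    print(a, end=' ')\n"
    "    a, b = b, a + b\n"
)
print(score_program(fib, "0 1 1 2 3 5 8 13 21 34"))
print(score_program("1 / 0", ""))
```

Each call launches a fresh interpreter, so thousands of these per training step is exactly the kind of many-core, single-thread-sensitive load Buck is pitching Vera for.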

It is in the critical path. There are ways of overlapping. You’ll hear about off-policy, where maybe you’re training on the N-minus-one data, doing pipelining. But you can’t do too much of that because you get model drift.
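The trade-off between overlap and drift can be put in rough numbers. The model below is a deliberately simple, hypothetical one: each iteration has a CPU phase (run and score rollouts) and a GPU phase (train), and a lag of k means the data consumed at step n was produced with the weights from step n - k. The timings are made up.

```python
def step_time(t_cpu: float, t_gpu: float, lag: int) -> float:
    """Wall-clock seconds per RL iteration in a toy two-phase model."""
    if lag < 0:
        raise ValueError("lag must be non-negative")
    if lag == 0:
        return t_cpu + t_gpu  # on-policy: GPUs wait while CPUs execute and score
    return max(t_cpu, t_gpu)  # off-policy: the two phases overlap


def gpu_idle_fraction(t_cpu: float, t_gpu: float, lag: int) -> float:
    return 1.0 - t_gpu / step_time(t_cpu, t_gpu, lag)


def data_versions(num_steps: int, lag: int) -> list:
    """Weights version that generated the data used at each training step."""
    return [max(0, n - lag) for n in range(num_steps)]


for t_cpu in (30.0, 90.0):
    for lag in (0, 1):
        print(f"cpu={t_cpu:.0f}s lag={lag}: step={step_time(t_cpu, 60.0, lag):.0f}s "
              f"gpu idle={gpu_idle_fraction(t_cpu, 60.0, lag):.0%}")
```

Overlap removes GPU idle time only while the CPU phase is shorter than the GPU phase. With a slow CPU side (90 s here) the GPUs sit idle a third of the time even with a lag of 1, and in this model a deeper lag buys no speed, only staler data.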

So what the world is asking for, what it needs, is a really fast CPU that can generate a lot of training data while you’re training in order to make the model faster, and never let [the] GPU go idle. This might be a $30 billion, gigawatt data center of GPUs. I’m not going to skimp on the CPU side and have it sit idle, or have the potential of that model come up short because I couldn’t run the compilation for too long and had to cut it off.

And then finally, when you actually deploy AI after you’re done training, it’s not just the AI model. The GPUs are telling the CPUs what to do. They run a SQL query, or they render an image, or they go to a website — all that’s happening on the CPUs. The more tool calling that can happen in fixed power, the more efficient it can be and still maintain these interactive use cases, the more valuable those tokens are.

And lastly, as we get to the agent world, where it’s not just us doing chatbot with humans in the loop, we’re going to have agents talking to agents at machine speed. You just took humans out of the loop again. That can happen as fast as the computers can compute.

Journalist 2: So, just to be clear, your customers — your ODMs, your Dell, HPs — if they want to build a system, that’s what they’ll get?

Ian Buck: They can build it like this [referring to the reference board brought to the Q&A] or, we will ship the chip itself.

Journalist 2: So, in theory then, your partners could go off the reservation and build a gaming PC, or whatever they wanted to do with it?

Ian Buck: They could. I think they’re all highly motivated to build what Nvidia recommends [and take advantage of] the opportunities with agentic use cases.

Intel NVLink Fusion partnership

Journalist 3: Ian, what’s become of the partnership with Intel? Last year, you guys announced a partnership.

Ian Buck: We didn’t talk about it in this keynote, but it’s progressing. Fusion is a key part of that strategy. It’s an IP block plus a chiplet that allows CPUs like x86 to talk across NVLink to our GPUs, or even other accelerators. We’ve announced multiple partnerships including Intel, and that is definitely progressing. It takes a little while to integrate at the silicon level. Obviously, it’s pretty intimate integration. But I think we’ll see some more announcements about that shortly.

Journalist 4: Is that partnership going to involve implementing Nvidia IP on Intel process technology? And if so, who’s going to be doing the lifting there?

Ian Buck: There’s a separation between the manufacturing of who builds the chip or chiplets from the IP integration. The integration I talk about is the IP hooking into the fabric of the processor. This [the Vera module] is actually multiple chiplets. You’ve got multiple I/O dies, memory interface tiles, as well as the core. If you look at the right angle, you can see one, two, three, four, five, six pieces of silicon come together. So who builds which piece, in which factory, and who does the integration, that’s up to the partners. It’ll be different for each integration.

Journalist 2: We asked Jensen about that, and he said, look, our bits will be coming from TSMC, the Intel stuff will be coming from wherever they choose to get it.

Journalist 4: I think we’re trying to determine, is this a toe in the water to develop Nvidia IP on Intel process technology? I asked Jensen yesterday, and he said he’s not excited.

Ian Buck: Obviously those questions are his [Jensen’s] domain. He’s a good person to be asking about those questions.

CPX is still a good idea, says Buck

Journalist 4: Looking at the disaggregated architecture that you’ve implemented with LPX, it does strike me as a situation where it almost makes more sense to pair CPX with LPX racks rather than relying on an HBM-based product like Rubin.

Ian Buck: CPX is still a good idea. It is the opportunity to improve token throughput, to get to that next tier of agents talking to agents that need to run a 1 trillion, 2 trillion parameter model with 400,000 to 500,000 KV input context at token rates of about 1,000 tokens per second, because there’s no human in the loop. Input tokens do impact decode speed […] so 400,000 tokens of context significantly changes the token rate.
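Why long context slows decode can be seen with a bandwidth-bound estimate: each new token has to read the active weights plus the KV cache of its own context. Every model dimension below (60 layers, 8 KV heads of width 128, FP8 storage, 32 billion active parameters) is a hypothetical stand-in, not a spec for any model Buck mentioned.

```python
def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bytes_per_elem: int) -> int:
    # One K vector and one V vector per layer per KV head.
    return 2 * layers * kv_heads * head_dim * bytes_per_elem


def bytes_read_per_decode_step(active_weight_bytes: int, kv_per_token: int, context_len: int) -> int:
    # Bandwidth-bound decode: each new token rereads the active weights
    # plus the whole KV cache of its own context.
    return active_weight_bytes + kv_per_token * context_len


KV = kv_bytes_per_token(layers=60, kv_heads=8, head_dim=128, bytes_per_elem=1)
ACTIVE_WEIGHTS = 32 * 10**9  # hypothetical: 32B active parameters at 1 byte each

short_ctx = bytes_read_per_decode_step(ACTIVE_WEIGHTS, KV, 4_000)
long_ctx = bytes_read_per_decode_step(ACTIVE_WEIGHTS, KV, 400_000)
print(KV * 400_000 / 1e9, "GB of KV cache for one 400k-token query")
print(round(long_ctx / short_ctx, 2), "x more bytes read per token at 400k context")
```

With these made-up numbers, one 400,000-token query carries roughly 49 GB of KV cache, about a hundred times the 500 MB of SRAM Buck quotes for an LPU. Batching amortizes the weight reads across queries but not the KV reads, which is why long context weighs so heavily on token rate.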

When we talk about pivoting from CPX to LPU, that’s where the focus was. Right now there’s a limit to how many chips […] we want to do this this year. We want to do this with Vera Rubin. Just because of that effort, this will help those agentic AI frontier labs be able to take that level of intelligence to market.

The volume AI market is offline inference, non-reasoning chatbots, recommendation systems, reasoning chatbots, multimodal, deep research. This [LPX] will not add value to all of those. Everything can be served on [Vera Rubin NVL72]. But that next tier is super important as we turn the corner, and it was important to make sure we had that brought to market this year.

CPX is an optimization, it’s still a good idea [and] it would help break down the cost of the pre-fill stage, but using these GPUs for the pre-fill portion of the workload is sufficient right now.

LPX paired with Vera Rubin

Journalist 4: If CPX is not coming until 2027, but Vera as a standalone is available sooner than that, is the Vera CPU going to be available sooner [unintelligible] will there be something that doesn’t use AI to compare it with before 2027?

Ian Buck: The LPU racks, Groq, they would run the whole model on the LPU racks alone. That capability exists. But the challenge with doing that is you had to feed not only the entire model, but all of the KV cache and all of the multiple queries on an SRAM chip that only has 500 megabytes. This [Vera Rubin GPU] has 280 gigabytes.

So as models got bigger and contexts got larger, and you just had to keep all of that state around, as well as do all that attention math, it gets costly to have that many LPUs run a trillion-parameter model with the weights plus KV cache. It didn’t need to be paired with anything. But it was very expensive. And Jensen showed that in the chart as well. You could get to 1,000 tokens per second, but the economics of doing that with that many chips just don’t work.
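Buck’s cost argument is mostly capacity arithmetic. The sketch below counts how many LPUs it would take to hold everything in SRAM, using his figures of 500 MB per LPU and 256 LPUs per LPX rack. The rest is assumed: a one-trillion-parameter model stored at one byte per parameter (FP8), and about 49 GB of KV cache per 400,000-token query (a hypothetical model shape). Activations, buffers, and replication are ignored, so real counts would be higher.

```python
SRAM_PER_LPU = 500 * 10**6  # bytes; Buck's "500 megabytes"
LPUS_PER_RACK = 256         # Groq 3 LPX rack, per Buck


def ceil_div(a: int, b: int) -> int:
    return -(-a // b)


def lpu_only_footprint(params: int, bytes_per_param: int,
                       kv_bytes_per_query: int, queries: int) -> tuple:
    """Chips and racks needed to hold weights plus KV cache entirely in LPU SRAM."""
    total = params * bytes_per_param + kv_bytes_per_query * queries
    chips = ceil_div(total, SRAM_PER_LPU)
    return chips, ceil_div(chips, LPUS_PER_RACK)


print(lpu_only_footprint(10**12, 1, 0, 0))                # weights only
print(lpu_only_footprint(10**12, 1, 49_152_000_000, 32))  # plus 32 long-context queries
```

Weights alone come to 2,000 chips in eight racks; adding 32 concurrent long-context queries pushes the count past 5,000 chips and 21 racks. Keeping the KV cache in GPU HBM instead, as Buck describes, moves that second term off the LPUs entirely.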

It has nothing to do with prefill. Pre-fill is just step one, how quickly can you get to your first token. After you’ve done that, there’s pre-fill, GPUs, or whatever you’re using in pre-fill. It doesn’t matter. Your token rate is all about the number of processors you’re using to generate every token after that. So it’s not as simple as that. If you just did [Groq 3] LPX, you would need a lot of chips because of all that context.

By combining the LPX with Vera Rubin, we don’t need all that. We just do on the LPU what it’s good at, which is basically the memory bandwidth, seven times faster than HBM. That lets the mixture-of-experts layers that are inside each expert group run here. The whole rest of the model, all the attention math, can run on the GPUs.

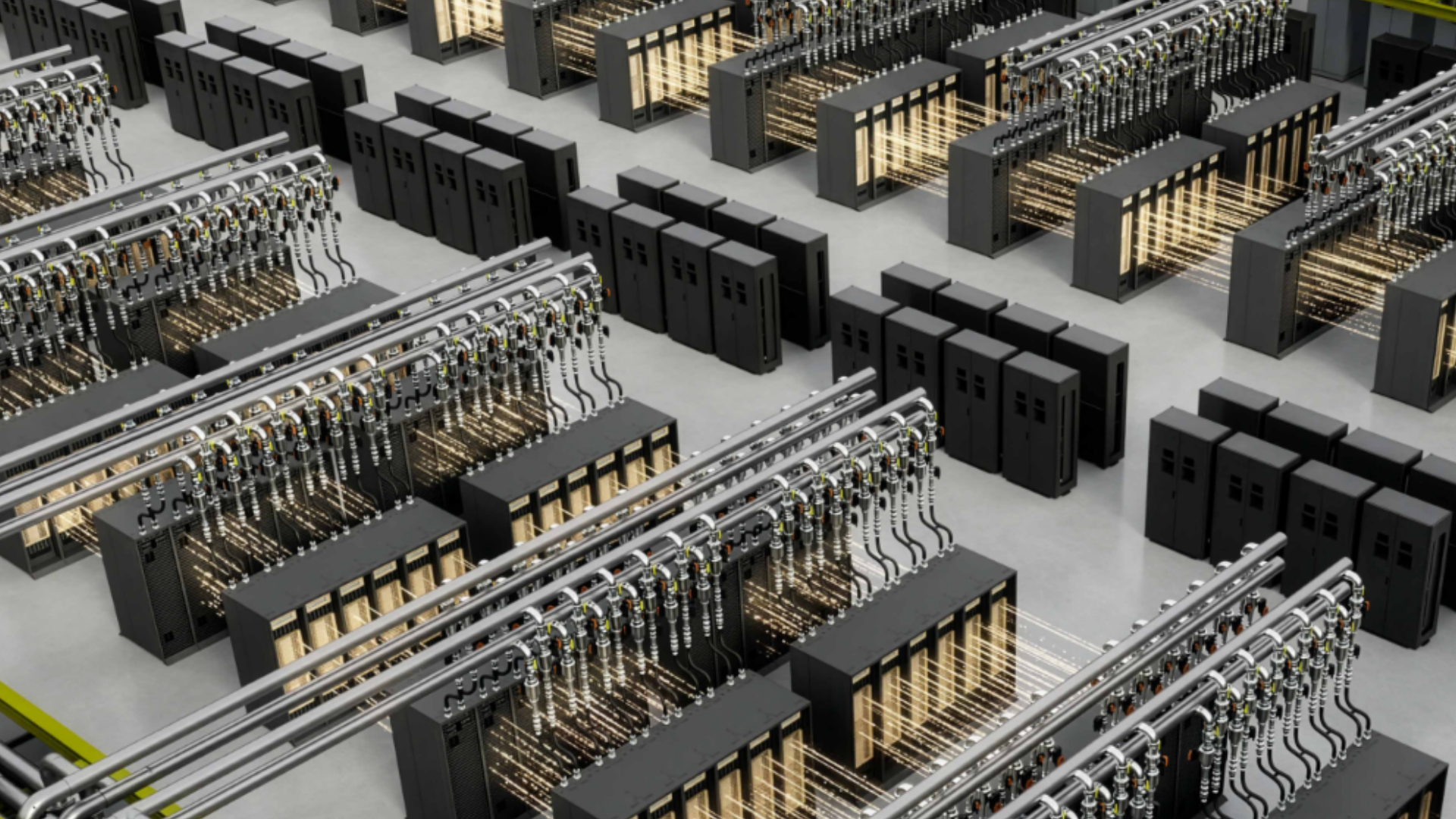

So instead of dozens of racks of LPX, we can deliver that level of performance with just two racks of LPX and one rack of Vera Rubin. And as a result, the token rate gets to 1,000 tokens per second, but the economics go back to the sweet spot. Tokens will be higher value for sure, tens or hundreds of tokens per second rather than thousands. And you can also deploy at data center scale to serve a market. Building it once, serving a few customers in a highly constrained environment is nice, but that doesn’t create a market. You have to build an architecture that, by combining LPX with Vera Rubin at one-to-one, or one-to-two, or maybe one-to-four rack ratios, can activate a market to deploy a 100-megawatt data center, a 500-megawatt, a gigawatt data center and serve those models economically.

Journalist 4: And just to be clear, all those benefits you’re talking about are on the decode side, after we’re over the pre-fill hump?

Ian Buck: Pre-fill […] it’s just the first token. How long does it take to get the first token? That’s all CPX was trying to optimize. It’s an important problem, but you can solve it with existing hardware. You can solve it with NVL72, with the older architectures. We can reduce the time to first token, and we can also solve it today by just adding a few more […] it parallelizes very easily. But it’s just the first token. [LPU decode] will increase the speed of every token after that.
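The pre-fill versus decode point follows from a simple latency model: the first token arrives after the time to first token (TTFT), and each remaining token arrives at the decode rate. The 20,000-token response and the 2-second TTFT below are hypothetical; 1,000 tokens per second is the rate Buck cites for LPX paired with Vera Rubin.

```python
def response_time_s(ttft_s: float, output_tokens: int, decode_tok_per_s: float) -> float:
    """End-to-end time: pre-fill delivers the first token, decode produces the rest."""
    return ttft_s + (output_tokens - 1) / decode_tok_per_s


for rate in (100.0, 1000.0):
    for ttft in (2.0, 1.0):  # halving TTFT, e.g. by adding more pre-fill GPUs
        total = response_time_s(ttft, 20_000, rate)
        print(f"{rate:.0f} tok/s, TTFT {ttft:.0f}s -> {total:.1f}s")
```

For a long agentic response, halving TTFT saves about a second, while a tenfold faster decode saves about three minutes.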

LPX chip-to-chip

Journalist 3: I was wondering if you could talk a little bit more about how the LPX is going to connect to other chips, both in the Nvidia ecosystem and outside the Nvidia ecosystem? What about working with CPUs that other companies might make or that customers might procure from elsewhere?

Ian Buck: When we licensed the IP, obviously there was limited stuff that we could change. But there were some last-minute changes that we were able to make to bring it to market. So this is the version [Groq 3/LP30], which is almost largely what it was. We’re still using the chip-to-chip signaling that was already there.

Journalist 4: So there’s no Nvidia NVLink chip-to-chip on it yet.

Ian Buck: Not yet. As the roadmap shows, it’s coming with LP40 in the next generation. That’s when we’ll add the NVLink interconnect. On the next version, we’ll also rev the compute side, and we’ll add FP4 capabilities and all the Tensor Core math stuff that we have in our GPUs.

Scale-up rack-to-rack

Journalist 1: Can you walk us through how scale-up rack-to-rack works?

Ian Buck: [Pointing to the Vera Rubin module] This is a Vera Rubin. I have two Rubin GPUs here, one Vera CPU. These are the NVLink connections. This is purposely that close. This stuff is flying. The bandwidth in this direction [NVLink] is between 10x and 20x more, in terabytes per second, than in this direction [PCIe]. Here, we just use the same connector, but we have multiple lanes of PCIe connected to networking, multiple NICs, storage planes, or other systems.

The significance between the amount of bandwidth [unintelligible] this way… you need that much bandwidth to have all GPUs within one rack really operating as one. The main use of scale-up is parallelism, taking the most compute-intensive part of the computation and doing tensor parallelism across all the GPUs.

When the world went to mixture of experts, where every layer has many experts [unintelligible], Kimi K2 has over 200 experts per layer but only activates eight of them. Imagine using your entire brain to do two plus two. All those experts have to talk to all those experts extremely fast. That’s why NVLink is so fast and why we have a dedicated NVSwitch, a lot of them in the rack, purposely in the middle of the racks so the signal is super fast.
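The routing step Buck describes can be sketched as top-k gating: score every expert, keep the best k, and renormalize their weights. The expert count (256, in line with Buck’s “over 200”), top-8 selection, round-robin placement of experts on 72 GPUs, and random router logits are all illustrative assumptions, not Kimi K2’s or Nvidia’s actual configuration.

```python
import math
import random


def top_k_route(logits: list, k: int) -> list:
    """Return (expert_id, gate_weight) for the k highest-scoring experts."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = logits[top[0]]  # subtract the max for a numerically stable softmax
    weights = [math.exp(logits[i] - peak) for i in top]
    total = sum(weights)
    return [(i, w / total) for i, w in zip(top, weights)]


def gpus_touched(expert_ids, num_gpus: int) -> set:
    # Hypothetical placement: expert e lives on GPU e % num_gpus.
    return {e % num_gpus for e in expert_ids}


NUM_EXPERTS, TOP_K, NUM_GPUS = 256, 8, 72
rng = random.Random(0)
batch_experts = set()
for _ in range(64):  # a batch of 64 tokens, each with random router logits
    logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
    batch_experts.update(i for i, _ in top_k_route(logits, TOP_K))
print(len(batch_experts), "experts and",
      len(gpus_touched(batch_experts, NUM_GPUS)), "GPUs touched")
```

Even a modest batch, each token picking only 8 experts, spreads its work across most of the GPUs in the rack, so every MoE layer becomes an all-to-all exchange. That traffic pattern is what the NVLink and NVSwitch bandwidth has to absorb.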

We do it all in copper. You’ll see the copper cables in the back. There’s over 5,000 of them, because short-run copper is both fast and cheap. There’s no retimers. It’s also the lowest power. I don’t have to drive a retimer or a transceiver or an optical fiber. One of the real reasons we went to liquid cooling was not just to get GPU performance, but to connect as many GPUs together in copper so that we could provide scale-up without exploding the cost. You could build 72 GPUs all with fiber and that many transceivers, but it’s expensive and would consume a lot of power. A transceiver can consume significant power just slamming the laser on and off.

This generation, we’re all in copper. Jensen did talk about going beyond 72 GPUs per rack. In the overall design of the NVSwitches, we’re actually building an NVSwitch that has ports in the front. We can do a level two of NVLink and actually scale up the number of GPUs to 576. We have a prototype of that working with Grace Blackwell right now.

The models today don’t benefit from that, but the models tomorrow will. It’s a chicken-and-egg relationship between what capability we have versus what the models can do. And it’s important that we show we’re doing that, so that next-generation models can take advantage of it and design for that future where we have two layers of NVLink scale-up.

With the Kyber rack, we can densify further. We can put 144 GPUs in a single rack, again all in copper. And then we can even go a step further: with 144, we can scale to 576, and then double to 1,152. I really look forward to showing you that when we get there.

Journalist 4: Could you clarify how optical connections intersect with the roadmap? If there’s an optical-capable rack with Grace Blackwell in it, does that mean you’re going to put co-packaged optics with that generation?

Ian Buck: Rubin gets optical. And Ambulink is CPO [co-packaged optics].

Journalist 1: With LP30 only supporting FP8, are you looking at on-the-fly dequantization, or do you need to run everything in FP8?

Ian Buck: You don’t need to run everything in FP8. Today’s FP4, NVFP4, is done layer by layer, or actually block by block. When you go and look at Nvidia-optimized versions of all these models, you’ll see that some of the math is FP16, FP8, and FP4. We can mix and match.
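Block-wise scaling is what lets FP4 sit alongside higher-precision math. Below is a simplified fake-quantization sketch: each block of 16 values gets its own scale so that its largest magnitude maps to 6, the largest FP4 (E2M1) value, and every element is rounded to the nearest E2M1 grid point. NVFP4 is generally described as using 16-element blocks with FP8 scale factors; this sketch keeps scales in full precision and breaks rounding ties toward the smaller magnitude, so it is an illustration, not a bit-exact NVFP4 implementation.

```python
import math

# Non-negative magnitudes representable in FP4 E2M1 (the sign is stored separately).
E2M1_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block(block: list) -> tuple:
    """Return (scale, codes) so that scale * code approximates each value."""
    amax = max(abs(x) for x in block)
    scale = amax / E2M1_GRID[-1] if amax > 0 else 1.0
    codes = []
    for x in block:
        mag = min(E2M1_GRID, key=lambda g: abs(abs(x) / scale - g))
        codes.append(math.copysign(mag, x))
    return scale, codes


def fake_quantize(xs: list, block_size: int = 16) -> list:
    """Quantize then dequantize, one independently scaled block at a time."""
    out = []
    for start in range(0, len(xs), block_size):
        scale, codes = quantize_block(xs[start:start + block_size])
        out.extend(scale * c for c in codes)
    return out


# One outlier only coarsens its own block.
xs = [100.0] + [0.1] * 15 + [0.1] * 16
ys = fake_quantize(xs)
print(ys[1], ys[16])  # block 0's small values flush to 0; block 1 keeps about 0.1
```

Because each block carries its own scale, a single outlier costs precision only within its 16 neighbors, which is part of why layers or blocks can be assigned different precisions independently.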

The way the engineers do it is they explore the space and then they run both hand-coded and AI-generated kernels to try all the different combinations. We then run that on a fleet of GPUs that we’ve rented back from the clouds to explore the space to make sure it’s performant and accurate. We also test to make sure the accuracy didn’t fall off or drop to a point where it’s no longer valuable.
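The search described here, trying combinations of per-layer precisions and rejecting those that lose too much accuracy, can be shown in miniature. Everything below is hypothetical: three precisions with made-up relative costs and error contributions, four layers with made-up sensitivities, and an exhaustive search that only works because the toy space has 3^4 = 81 plans.

```python
from itertools import product

# Hypothetical per-layer costs: (relative run time, accuracy-loss proxy).
COSTS = {"fp16": (1.00, 0.000), "fp8": (0.55, 0.002), "fp4": (0.30, 0.010)}


def best_plan(num_layers: int, error_budget: float, sensitivity: list):
    """Fastest per-layer precision plan whose weighted error fits the budget."""
    best = None
    for plan in product(COSTS, repeat=num_layers):
        time = sum(COSTS[p][0] for p in plan)
        err = sum(COSTS[p][1] * s for p, s in zip(plan, sensitivity))
        if err <= error_budget and (best is None or time < best[0]):
            best = (time, plan, err)
    return best  # None if no plan fits the budget


print(best_plan(4, 0.02, [1.0, 5.0, 1.0, 0.5]))
```

With these numbers the search keeps the sensitive second layer at FP8 and drops only the least sensitive layer to FP4. Real models have far more layers and far more knobs than precision alone, so the space cannot be enumerated like this, which is why the exploration Buck describes takes a fleet of GPUs.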

One big data point that’s kind of fun: from October to January, the team was optimizing specifically focused on DeepSeek and DeepSeek-like models. They got that 4x uplift in just four months. Same GPUs, all the ones that everyone’s already bought, the whole install base 4x faster.

To do that, they actually ran about 250 simulations and then about 1.2 million GPU hours. We had all sorts of ideas exploring the entire space across a massive fleet of GPUs for four months to get those results.

There is still more to come. Software is an untold story here. People like to say who’s got the faster chip, and I’m like, who’s got the better software ecosystem? That’s why it’s so hard to benchmark these things, because the whole stack end-to-end matters. How efficiently all of your different chips work together. We need six chips today, seven chips tomorrow to make all this stuff actually get performance. And the combinatorial optimization space is massive. We’ve got 400 engineers that work on that, and it came to 1.2 million GPU hours.

[Session ends]
